B9) Time-objects

When BP3 goes beyond music

Beyond notes

A tabla player does not think in isolated notes. When he pronounces tirakita, he does not think of four separate strokes — he thinks of a word, a unique gesture in a rhythmic language. What if our formalism could reason in the same way?

Where does this article fit in?

This article explores a dimension of BP3 often overlooked: its ability to manipulate time-objects, i.e., any entities possessing temporal properties. B5 showed how BP3 compresses and superimposes streams; B7 how code is translated to SuperCollider. Here, we see that the grammar’s terminals are not necessarily MIDI notes — they can be synthesis instructions, audio fragments, or even commands for a robot.

Why is this important?

Most computer music languages are built around the note: an event with a pitch, duration, and velocity. This is the MIDI model, which has dominated since 1983. Even the most sophisticated systems (OpenMusic, PWGL, bach for Max) remain fundamentally note manipulators.

BP3 operates at a higher level of abstraction. For the grammar engine, it doesn’t matter whether the terminal is a C4 at velocity 80 or a Csound synthesis instruction with 200 parameters. What matters are the object’s temporal properties: its duration, its ability to be compressed or truncated, its synchronization points. The content is opaque — only the temporal form is manipulated by the grammar.

This is the difference between writing with words and writing with letters. A MIDI sequencer writes letter by letter (NoteOn, NoteOff, NoteOn…). BP3 writes with words — units of meaning with their own properties — and organizes them by grammar.

The idea in one sentence

A time-object is any entity possessing a duration and temporal properties, which the BP3 grammar can organize without knowing its content — making BP3 not a note sequencer, but a universal time-setting engine.

Let’s explain step by step

1. What is a time-object?

A time-object is the elementary building block of BP3. It is a sequence of messages — MIDI, Csound, or other — having a duration in time.

The simplest form is a note: a NoteOn/NoteOff pair on a MIDI channel. But a time-object can also be:

- A rhythmic word composed of several strokes (tirakita = 4 tabla strokes)

- A Csound synthesis instruction with its parameters (frequency, amplitude, envelope…)

- A zero-duration object (out-time object) that sends all its messages simultaneously — useful for triggering a chord or a punctual event

- An input object that waits for an external signal (MIDI note, mouse click) to synchronize real-time performance

- A simple temporal interval in smooth time mode, serving as a duration marker (the time patterns t1, t2… of B12)
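To make the definition concrete, here is a minimal Python sketch of a time-object as a named list of timestamped messages whose content is never inspected. The class `TimeObject`, its fields and the message tuples are hypothetical illustrations chosen for this article, not BP3's internal data structures.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class TimeObject:
    """Hypothetical model: a named sequence of timestamped messages.

    Each message is (offset, payload), with the offset in beats from the
    start of the object. The payload is opaque: a MIDI tuple, a Csound
    line, a robot command...
    """
    name: str
    duration: float  # in beats; 0.0 marks an out-time object
    messages: list[tuple[float, Any]] = field(default_factory=list)

    @property
    def is_out_time(self) -> bool:
        return self.duration == 0.0

    def events(self, start: float) -> list[tuple[float, Any]]:
        """Return absolute (time, payload) pairs when the object starts at `start`."""
        if self.is_out_time:
            # Out-time object: every message is sent at the same instant.
            return [(start, payload) for _, payload in self.messages]
        return [(start + offset, payload) for offset, payload in self.messages]


# A single note: a NoteOn/NoteOff pair (MIDI note 60 = C4).
note = TimeObject("C4", 1.0, [(0.0, ("note_on", 60, 80)), (1.0, ("note_off", 60, 0))])

# A chord as an out-time object: its three NoteOns are sent together.
chord = TimeObject("chord", 0.0, [(0.0, ("note_on", p, 80)) for p in (60, 64, 67)])

print(note.events(start=4.0))
print(chord.events(start=2.0))
```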

The analogy proposed by the creators of BP2 is striking: a traditional sequencer is like a pixel image — each event is defined individually, point by point. BP3 is like a vector image — objects are abstract entities whose temporal form is calculated dynamically according to context. The same tirakita object will be played differently at 60 BPM and at 120 BPM, not because the composer reprogrammed each stroke, but because the object’s temporal properties dictate how it adapts.
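The "vector" side of the analogy can be sketched in a few lines: the object is stored once with onsets expressed in beats, and its realization in seconds is computed from the tempo. This is a hypothetical illustration that uses plain proportional scaling, the simplest possible behavior; the names `TIRAKITA` and `realize` are invented for the example.

```python
# Hypothetical illustration: tirakita stored once as four stroke onsets in
# beats, then realized in seconds at different tempi.
TIRAKITA = [("ti", 0.0), ("ra", 0.25), ("ki", 0.5), ("ta", 0.75)]  # onsets in beats


def realize(strokes, bpm, start_s=0.0):
    """Convert beat-relative onsets into absolute seconds at the given tempo."""
    seconds_per_beat = 60.0 / bpm
    return [(name, start_s + beat * seconds_per_beat) for name, beat in strokes]


print(realize(TIRAKITA, 60))   # strokes 0.25 s apart
print(realize(TIRAKITA, 120))  # strokes 0.125 s apart
```

Nothing in the stored object changes between the two calls; only the context (the tempo) does.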

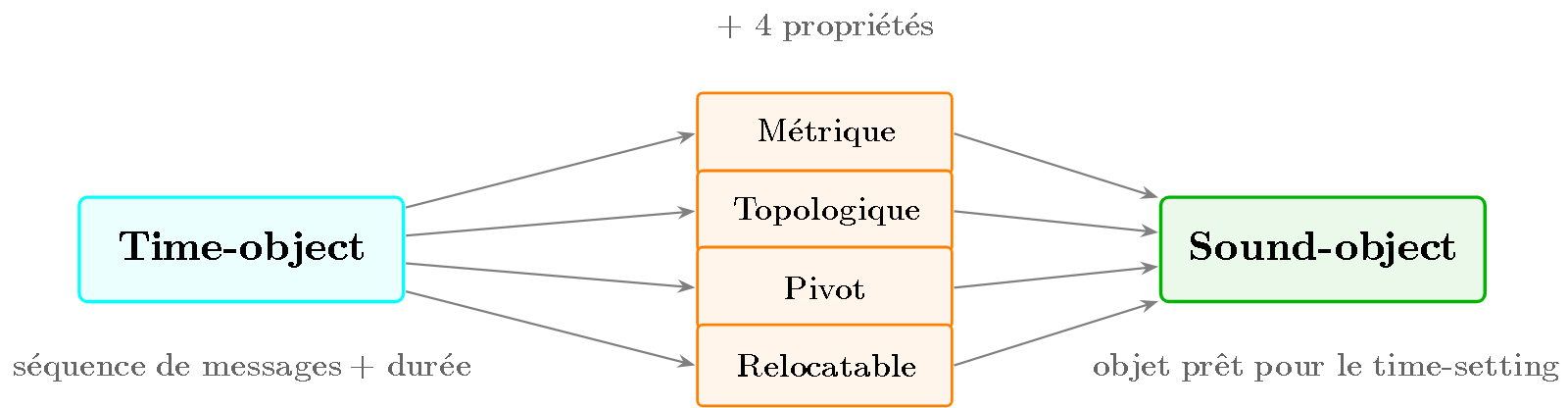

2. From time-object to sound-object: the four properties

A bare time-object is a sequence of messages with a duration. But to participate in a realistic musical performance, it needs properties that govern its temporal behavior. When a time-object is enriched with these properties, it becomes a sound-object.

Bernard Bel defines four fundamental properties:

Metric properties — How the object’s internal timing adjusts to the tempo. Do the four strokes of tirakita maintain equal spacing when the tempo changes? Or are some strokes “elastic” and others “rigid”? The metric property encodes this information.
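One way to picture an "elastic" versus "rigid" metric property is the sketch below: each stroke carries a nominal duration at a reference tempo and a flag saying whether it follows the tempo. The reference tempo, the flags and the choice of which strokes are elastic are all assumptions made for illustration, not Bel's encoding of metric properties.

```python
# Hypothetical model of a metric property: each stroke has a nominal duration
# (seconds at a reference tempo) and a flag saying whether it stretches with
# the tempo ("elastic") or keeps its absolute length ("rigid").
REF_BPM = 60.0


def stroke_durations(strokes, bpm, ref_bpm=REF_BPM):
    """Return the realized duration of each stroke at `bpm`."""
    ratio = ref_bpm / bpm  # below 1 when the tempo speeds up
    return [(name, dur * ratio if elastic else dur) for name, dur, elastic in strokes]


# Assumed split: ti and ra follow the tempo; ki and ta keep a fixed 0.25 s.
tirakita = [("ti", 0.25, True), ("ra", 0.25, True), ("ki", 0.25, False), ("ta", 0.25, False)]

print(stroke_durations(tirakita, 120))
# [('ti', 0.125), ('ra', 0.125), ('ki', 0.25), ('ta', 0.25)]
```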

Topological properties — How the object interacts with its neighbors. Can it be truncated at the beginning if the previous object encroaches? Can it overlap the next object? These properties govern phrasing: how objects connect, overlap, or separate — exactly like phonemes in speech change in contact with their neighbors (the phenomenon of coarticulation).

The pivot — A temporal point within the object that anchors to a beat or a time streak. The pivot is not necessarily the beginning of the object: in tirakita, it might be the third stroke that coincides with the beat, not the first.
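The arithmetic behind a pivot is simple: the object's start is the beat time minus the pivot's offset inside the object. The sketch below assumes onsets in seconds and a pivot identified by stroke index; `place_by_pivot` is a hypothetical helper, not a BP3 function.

```python
# Hypothetical sketch: place an object so that its pivot, not its start,
# falls on a given beat. Offsets are in seconds from the object's beginning.
def place_by_pivot(onsets, pivot_index, beat_time):
    """Return absolute onsets such that onsets[pivot_index] lands on beat_time."""
    start = beat_time - onsets[pivot_index]
    return [start + t for t in onsets]


tirakita = [0.0, 0.25, 0.5, 0.75]  # ti, ra, ki, ta
# Pivot on the third stroke (ki), anchored to the beat at t = 2.0 s.
print(place_by_pivot(tirakita, pivot_index=2, beat_time=2.0))
# [1.5, 1.75, 2.0, 2.25]
```

The object begins half a second before the beat: the first two strokes act as an anticipation leading into the pivot.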

Relocatable — Can the object be moved freely in time, or is it anchored to a fixed position?

Figure 1 — From time-object to sound-object. A time-object (sequence of messages with a duration) is enriched with four properties (metric, topological, pivot, relocatable) to become a sound-object ready to be placed in time by the time-setting algorithm.

3. Time-setting: placing objects in time

Time-setting is the central mechanism that distinguishes BP3 from a simple sequencer. It is a constraint satisfaction algorithm that takes a structure of sound-objects (produced by the grammar) and calculates their placement in physical time.

The algorithm simultaneously solves several types of constraints:

- Truncate the beginning of an object if the previous object encroaches (topological property)

- Adjust the internal tempo of each object according to context (metric property)

- Move objects backward in time to respect precedence relationships
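To give a feel for how topological properties drive placement, here is a deliberately naive, single-pass Python sketch. It resolves an overlap between neighbors by accepting it, by truncating the beginning of the later object, or by shifting that object later. It is a simplification for illustration, not Bel's time-setting algorithm, which handles more constraint types; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SoundObject:
    name: str
    start: float                      # desired start time (seconds)
    duration: float
    can_overlap_next: bool = False    # topological: may its end overlap the next object?
    can_truncate_start: bool = False  # topological: may its beginning be cut?
    relocatable: bool = True          # may it be shifted later in time?


def time_set(objects):
    """Naive left-to-right pass over objects sorted by start time.

    Returns (name, start, duration) triples, or raises ValueError when a
    conflict cannot be resolved with the available properties.
    """
    result, prev, prev_end = [], None, None
    for obj in objects:
        start, dur = obj.start, obj.duration
        if prev is not None and prev_end > start and not prev.can_overlap_next:
            overlap = prev_end - start
            if obj.can_truncate_start and overlap < dur:
                # Cut the beginning: same end point, later start, shorter duration.
                start, dur = prev_end, dur - overlap
            elif obj.relocatable:
                # Shift the whole object so it starts when its predecessor ends.
                start = prev_end
            else:
                raise ValueError(f"cannot place {obj.name}: it overlaps {prev.name}")
        result.append((obj.name, start, dur))
        prev, prev_end = obj, start + dur
    return result


after_dha = [
    SoundObject("dha", 0.0, 1.25),  # rings past 1.0 s
    SoundObject("tirakita", 1.0, 1.0, can_truncate_start=True),
    SoundObject("ge", 1.75, 0.5),
]
print(time_set(after_dha))
# [('dha', 0.0, 1.25), ('tirakita', 1.25, 0.75), ('ge', 2.0, 0.5)]
print(time_set([SoundObject("tirakita", 1.0, 1.0, can_truncate_start=True)]))
# [('tirakita', 1.0, 1.0)]
```

The same tirakita object is realized differently depending on what precedes it: intact after a silence, shortened at the start after a dha that rings on.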

Bernard Bel compares this process to coarticulation in speech. When you pronounce “papa”, the second “p” is not identical to the first — its realization is modified by adjacent sounds. Similarly, a tirakita played after a silence is not identical to a tirakita played after a dha — time-setting adjusts the phrasing according to context.

What is remarkable is that the composer does not need to program these adjustments. He defines the object properties once, and the time-setting algorithm calculates the phrasing automatically for each context. The polymetric representation (see B5) thus makes it possible to produce natural phrasing without resorting to randomization instructions — this is, according to Bernard Bel, “a major discovery for computer music”.

4. Csound: the first non-MIDI output

If the grammar’s terminals are time-objects and not notes, nothing obliges these objects to be MIDI messages. This is exactly what Bernard Bel explored with the Csound output.

Csound, created by Barry Vercoe at MIT, is a sound synthesis language of considerable power: it allows defining any synthesis process (subtractive, additive, FM, granular, physical…) in the form of instruments (.orc files) driven by scores (.sco files).

In BP3, a time-object can be a Csound instruction instead of a NoteOn/NoteOff pair. The _ins(x) procedure assigns a Csound instrument to the generated objects. The grammar terminal is no longer “play a C4” but “execute instrument 3 with parameters frequency=440, amplitude=0.8, attack=0.01”.
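For readers unfamiliar with Csound scores, the sketch below renders time-objects as score "i" statements. In a Csound score, p1 is the instrument number, p2 the start time and p3 the duration; fields from p4 onward are defined by the instrument itself, so the (frequency, amplitude, attack) order is an assumption about a hypothetical instrument 3. The function `to_csound_score` is illustrative and is not how BP3 generates its Csound output.

```python
# Hypothetical sketch: rendering time-objects as Csound score "i" statements.
# p1 = instrument, p2 = start, p3 = duration; p4 onward depend on the
# instrument (here assumed to be frequency, amplitude, attack).
def to_csound_score(events):
    lines = []
    for instr, start, dur, params in events:
        fields = [f"i{instr}", f"{start:g}", f"{dur:g}"] + [f"{p:g}" for p in params]
        lines.append(" ".join(fields))
    return "\n".join(lines)


events = [
    (3, 0.0, 0.5, (440, 0.8, 0.01)),  # instrument 3, frequency=440, amplitude=0.8, attack=0.01
    (3, 0.5, 0.5, (660, 0.6, 0.01)),
]
print(to_csound_score(events))
# i3 0 0.5 440 0.8 0.01
# i3 0.5 0.5 660 0.6 0.01
```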

This change is conceptually profound. With MIDI, terminals live in a discrete and limited space: 128 pitches, 128 velocities, 16 channels. With Csound, terminals live in a continuous and unlimited space: any synthesis process parameterized by real values. The grammar remains the same — only the nature of the objects it manipulates changes.
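The gap between the two spaces can be measured. The sketch below maps a frequency onto the MIDI pitch scale using the standard equal-temperament convention (A4 = 440 Hz = note 69, 12 steps per octave) and shows what is lost when the result is rounded to a note number. The helper name is hypothetical; the sketch ignores pitch bend, which MIDI offers as a partial workaround.

```python
import math


def freq_to_midi(freq_hz: float) -> float:
    """Continuous MIDI pitch: A4 = 440 Hz = note 69, 12 steps per octave."""
    return 69 + 12 * math.log2(freq_hz / 440.0)


for f in (440.0, 445.0, 466.16):
    exact = freq_to_midi(f)
    print(f"{f:7.2f} Hz -> MIDI {exact:6.2f} -> quantized to note {round(exact)}")
```

A Csound parameter can keep 445 Hz as is; a MIDI note number has to collapse it onto note 69.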

Bernard Bel envisioned going further: real-time Csound output and automatic extraction of instruments from existing Csound scores. The BP2SC project (B7) continues this logic with SuperCollider — SynthDefs replacing Csound instruments.

5. Granular synthesis: grains as time-objects

One of the most visionary applications of time-objects comes from composer Otto Laske, who worked with granular synthesis in the 1990s.

Granular synthesis, theorized by Iannis Xenakis (1971) and developed by Curtis Roads (Microsound, 2001), builds sound from grains — micro-fragments of sound from 1 to 100 milliseconds. A single grain is imperceptible as a musical event; but thousands of grains, organized into clouds and textures, produce sounds of extraordinary richness.

Laske's reasoning followed directly: if a grain is a time-object, then a BP3 grammar can organize grains. One could write:

S --> {texture_dense, drone_grave}

texture_dense --> grain_aigu grain_aigu grain_moyen grain_aigu

drone_grave --> grain_long grain_long

The polymetry of B5 would apply naturally: {texture_dense, drone_grave} would superimpose a fast texture of high-pitched grains with a slow drone of low-pitched grains — both compressed into the same duration.
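The intended result of the grammar above can be simulated in a few lines: rewrite each symbol until only terminals remain, then spread each voice evenly over the same total duration. This is a hypothetical sketch of the idea, not BP3's rewriting or polymetric engine; the total duration is passed in explicitly, an assumption of this sketch.

```python
# Hypothetical sketch: deterministic rewriting of the rules above, then
# polymetric timing in which every voice of {A, B} fills the same duration.
RULES = {
    "texture_dense": ["grain_aigu", "grain_aigu", "grain_moyen", "grain_aigu"],
    "drone_grave": ["grain_long", "grain_long"],
}


def expand(symbol):
    """Rewrite a symbol until only terminals (symbols without a rule) remain."""
    if symbol not in RULES:
        return [symbol]
    return [t for s in RULES[symbol] for t in expand(s)]


def polymetric(voices, total_duration):
    """Place each voice's terminals evenly within the same total duration."""
    timeline = []
    for voice in voices:
        terminals = expand(voice)
        step = total_duration / len(terminals)
        timeline += [(voice, t, i * step, step) for i, t in enumerate(terminals)]
    return timeline


# S --> {texture_dense, drone_grave}, assumed here to last 1 second.
for row in polymetric(["texture_dense", "drone_grave"], total_duration=1.0):
    print(row)
```

The four high grains each last 0.25 s while the two low grains each last 0.5 s: both voices end together.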

In 1990, this was beyond the technical capabilities of machines. The computation time required to generate and place thousands of grains per second was prohibitive. But in 2026, the available computing power makes this vision perfectly feasible. A 10 ms grain is a time-object like any other — with its metric properties (fixed or elastic internal duration), its pivot (the amplitude peak), and its topological properties (can it overlap the next grain?).
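As a small illustration of two of these properties, the sketch below describes a 10 ms grain with an assumed Hann-window envelope and derives its pivot from the amplitude peak. The sample rate, the window shape and the dictionary layout are assumptions made for the example.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate (Hz)


def hann(n_samples):
    """Hann window: the amplitude envelope assumed for one grain."""
    return [0.5 * (1 - math.cos(2 * math.pi * n / (n_samples - 1))) for n in range(n_samples)]


def grain(duration_ms, sample_rate=SAMPLE_RATE):
    """Describe a grain as a time-object: a duration plus a pivot at the amplitude peak."""
    n = int(round(duration_ms * sample_rate / 1000))
    env = hann(n)
    peak_index = max(range(n), key=env.__getitem__)
    return {
        "duration_ms": duration_ms,
        "pivot_ms": 1000 * peak_index / sample_rate,
        "envelope": env,
    }


g = grain(10.0)
print(g["duration_ms"], round(g["pivot_ms"], 2))
```

With a symmetric window the pivot sits in the middle of the grain, so anchoring grains by their pivots aligns their loudest points rather than their onsets.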

Grammar-organized granular synthesis would open the door to formal multi-scale composition: the same grammar would structure musical phrases (seconds), granular textures (milliseconds), and global form (minutes) — three temporal levels unified by a single formalism.

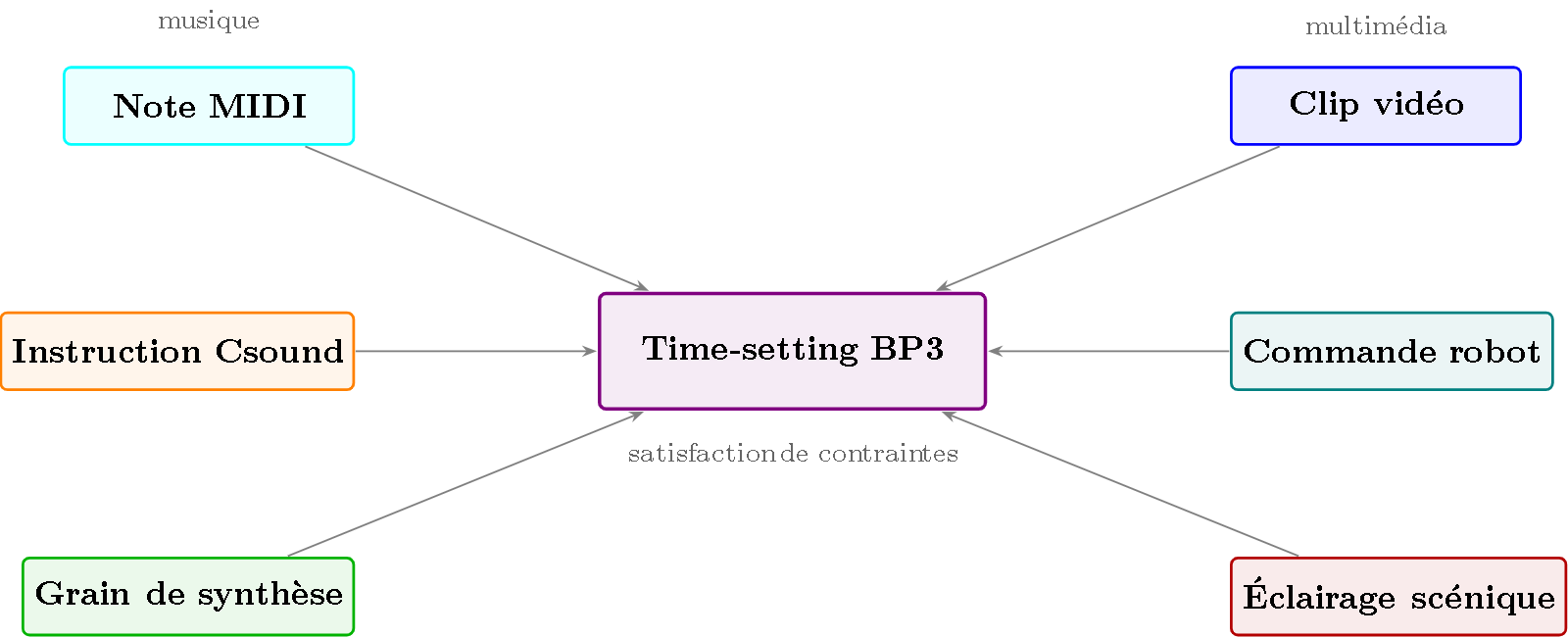

6. Beyond sound: video, robotics, multimedia

If a time-object can be a MIDI note, a Csound instruction, or a synthesis grain, it can also be… anything else that unfolds in time.

Bernard Bel explicitly states: the time-object model “could easily be applied to other types of events such as the scheduling, sizing and precise timing of video clips, robot commands, and so on”.

Let’s take a few examples:

Grammar-based video editing. A video clip is a time-object: it has a duration, a possible cut point (pivot), and transition properties with adjacent clips (fade, cut, wipe). A grammar could generate editing sequences:

S --> intro {développement, commentaire_voix_off} conclusion

intro --> plan_large plan_moyen gros_plan

Polymetry would superimpose tracks (image + sound + subtitles), time-setting would manage transitions, and grammar would ensure the structural coherence of the edit.
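A toy version of the editing example treats each clip as a time-object whose incoming transition is a topological property: a fade lets the clip overlap its predecessor, a cut does not. The clip names come from the grammar above; the durations, fade lengths and the `layout` helper are invented for illustration.

```python
# Hypothetical sketch: clips laid end to end. A "fade" lets a clip overlap its
# predecessor by fade_s seconds; a "cut" does not. Durations are illustrative.
def layout(clips):
    """clips: list of (name, duration_s, transition_in, fade_s). Returns (name, start, end)."""
    timeline, cursor = [], 0.0
    for name, dur, transition, fade in clips:
        start = cursor - fade if (timeline and transition == "fade") else cursor
        timeline.append((name, start, start + dur))
        cursor = start + dur
    return timeline


intro = [
    ("plan_large", 4.0, "cut", 0.0),
    ("plan_moyen", 3.0, "fade", 0.5),
    ("gros_plan", 2.0, "cut", 0.0),
]
for clip in layout(intro):
    print(clip)
```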

Formal choreography. A robot command (or stage lighting) is a time-object: “left arm up for 2 seconds” has a duration, overlap constraints with adjacent movements, and a synchronization point with the music. This is exactly what Bernard and Andréine Bel explored with the composition Shapes in Rhythm (1994), created for 6 Kathak dancers with music-choreography synchronization.

Interactive installations. Input objects, zero-duration time-objects that await an external signal, allow basic real-time synchronization. A motion sensor sends the awaited signal, and the performance of the rest of the structure resumes from that point. The structure remains formal; interaction is integrated.
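The effect of a single input object on a schedule can be sketched as a shift: everything after the waiting point is postponed by however late the signal arrives. The `"<input>"` marker, the schedule format and the rule that an early signal causes no shift are assumptions of this sketch, not BP3 behavior.

```python
# Hypothetical sketch: one input object as a zero-duration marker in a
# schedule. Everything after it is postponed until the external signal
# arrives; an early signal causes no shift (an assumption of this sketch).
def realize_with_input(schedule, signal_time):
    """schedule: list of (name, time) in performance order; "<input>" marks the wait."""
    shift = 0.0
    realized = []
    for name, t in schedule:
        if name == "<input>":
            shift = max(0.0, signal_time - t)  # how late the signal arrived
            continue
        realized.append((name, t + shift))
    return realized


schedule = [("intro", 0.0), ("<input>", 2.0), ("response", 2.0), ("coda", 3.5)]
print(realize_with_input(schedule, signal_time=5.0))
# [('intro', 0.0), ('response', 5.0), ('coda', 6.5)]
```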

The common thread: anything that has a duration and synchronization points can be a time-object. The grammar does not need to “understand” what a video or a robot movement is — it manipulates temporal properties, not content.
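The principle that the scheduler reads only temporal properties can be expressed directly with a generic type: the function below places objects by their durations and never looks at their content. `Timed` and `sequence` are hypothetical names, and the video file names are invented; the point is that MIDI tuples, robot commands and clips go through the same code path.

```python
from dataclasses import dataclass
from typing import Generic, TypeVar

T = TypeVar("T")  # the content type, never inspected by the scheduler


@dataclass
class Timed(Generic[T]):
    content: T
    duration: float


def sequence(objects: list[Timed[T]], start: float = 0.0) -> list[tuple[float, T]]:
    """Place objects one after another, reading only their durations."""
    placed, t = [], start
    for obj in objects:
        placed.append((t, obj.content))
        t += obj.duration
    return placed


midi = [Timed(("note_on", 60, 80), 0.5), Timed(("note_on", 62, 80), 0.5)]
robot = [Timed("left arm up", 2.0), Timed("left arm down", 1.0)]
video = [Timed("plan_large.mp4", 4.0), Timed("gros_plan.mp4", 2.0)]

for track in (midi, robot, video):
    print(sequence(track))
```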

Figure 2 — BP3’s time-setting as a universal engine. Whether it’s MIDI notes, Csound instructions, synthesis grains, video clips, robotic commands, or stage lighting, the mechanism is the same: the time-setting algorithm places objects in time while respecting their metric and topological properties.

Key takeaways

- A time-object is the elementary building block of BP3: a sequence of messages with a duration. Enriched with four properties (metric, topological, pivot, relocatable), it becomes a sound-object.

- Time-setting is a constraint satisfaction algorithm that places sound-objects in physical time, producing natural phrasing by analogy with speech coarticulation.

- BP3 does not manipulate the objects’ content — only their temporal properties. This allows it to process MIDI notes, Csound instructions, or any event inscribed in time.

- Granular synthesis (Laske, Roads) is the most visionary application: organizing thousands of micro-sound fragments by grammar, unifying three temporal scales within a single formalism.

- The generalization is radical: anything that has a duration and synchronization points can be a time-object — video, robotics, lighting, multimedia. BP3 is not a note sequencer but a universal time-setting engine.

To go further

- Bel, B. (1992). “Time-setting of sound-objects: a constraint-satisfaction approach”. — The foundational article on time-setting.

- Bel, B. (2001). “Rationalizing Musical Time: a constraint-satisfaction approach”. — The complete theoretical framework.

- Bel, B. (2005). “Two algorithms for the instantiation of structures of musical objects”. HAL halshs-00004504. — The time-setting algorithms.

- Roads, C. (2001). Microsound. MIT Press. — The reference on granular synthesis and temporal scales.

- Xenakis, I. (1971). Formalized Music. Indiana University Press. — The foundations of granular synthesis and stochastic music.

- Dannenberg, R. (1993). “Music representation issues, techniques, and systems”. Computer Music Journal, 17(3). — Overview of musical representations.

- BP3 Documentation: Time patterns & smooth time. Smooth time in detail.

- BP3 Documentation: Shapes in Rhythm. Choreographic composition with time-objects.

Glossary

- Coarticulation: In phonetics, the modification of a sound by its adjacent sounds. By analogy, BP3’s time-setting modifies the phrasing of a sound-object based on its temporal context.

- Csound: Sound synthesis language created by Barry Vercoe (MIT, 1986). BP3 can generate Csound code as an alternative to MIDI, allowing arbitrarily complex grammar terminals.

- Grain: In granular synthesis, a micro-sound fragment (typically 1-100 ms) which, combined with thousands of others, forms complex textures and sounds.

- Input object: A zero-duration time-object that waits for an external signal (MIDI note, mouse click) to synchronize real-time performance.

- Microsound: Curtis Roads’ term for the temporal scale of grains (below the note, above the sample).

- Out-time object: A zero-duration time-object whose messages are all sent simultaneously — useful for chords or punctual events.

- Metric properties: Characteristics of a sound-object related to its internal duration and how its timing adjusts to tempo.

- Topological properties: Characteristics of a sound-object related to its neighborhood relationships (truncation, overlap, adjacency).

- Sound-object: A time-object enriched with metric and topological properties, ready to be placed in time by the time-setting algorithm.

- Time-object: The elementary building block of BP3 — any sequence of messages possessing a duration. The generic term includes notes, Csound instructions, and any temporal event.

- Time-setting: A constraint satisfaction algorithm that calculates the physical placement of sound-objects in time, taking into account their properties and context.

Links

Prerequisites: B5, B7

Reading time: 12 min

Tags: #time-objects #BP3 #sound-object #Csound #granular-synthesis #multimedia #algorithmic-composition

Next article: B10 — Formal BP3 grammar