M7) The Landscape of Formal Music Languages

From MUSIC V to TidalCycles — where does BP3 fit in?

Over twenty languages, seven decades, one goal: to give music a formal language. A guided tour of a vast, fragmented landscape — and a niche that only BP3 occupies.

Where does this article fit in?

In M3, we mapped out the six levels of musical abstraction — from raw signal (level 1) to functional pattern (level 6). In I3, we introduced SuperCollider, the Swiss Army knife that spans these levels. But SuperCollider is not alone. The landscape of formal music languages is vast and heterogeneous, and no single article fully maps it.

This article aims to explore this landscape, position each language on the M3 map, and understand why BP3 occupies a territory no one else inhabits. This article sets the stage for M11 (coming soon), which will delve deeper into the lineage between BP3 and TidalCycles.

Why is this important?

Choosing a musical language means choosing what one can think musically. A composer in Csound thinks in signals. A live-coder in TidalCycles thinks in cyclical patterns. A BP3 user thinks in grammars and derivations. Language does not transcribe musical thought — it shapes it.

The problem: the landscape is vast but fragmented. Each community knows its own tool, but bridges between them are rare. Comparing Faust and TidalCycles is a bit like comparing a microscope and a telescope — they are both optical instruments, but they don’t look in the same direction.

The idea in one sentence

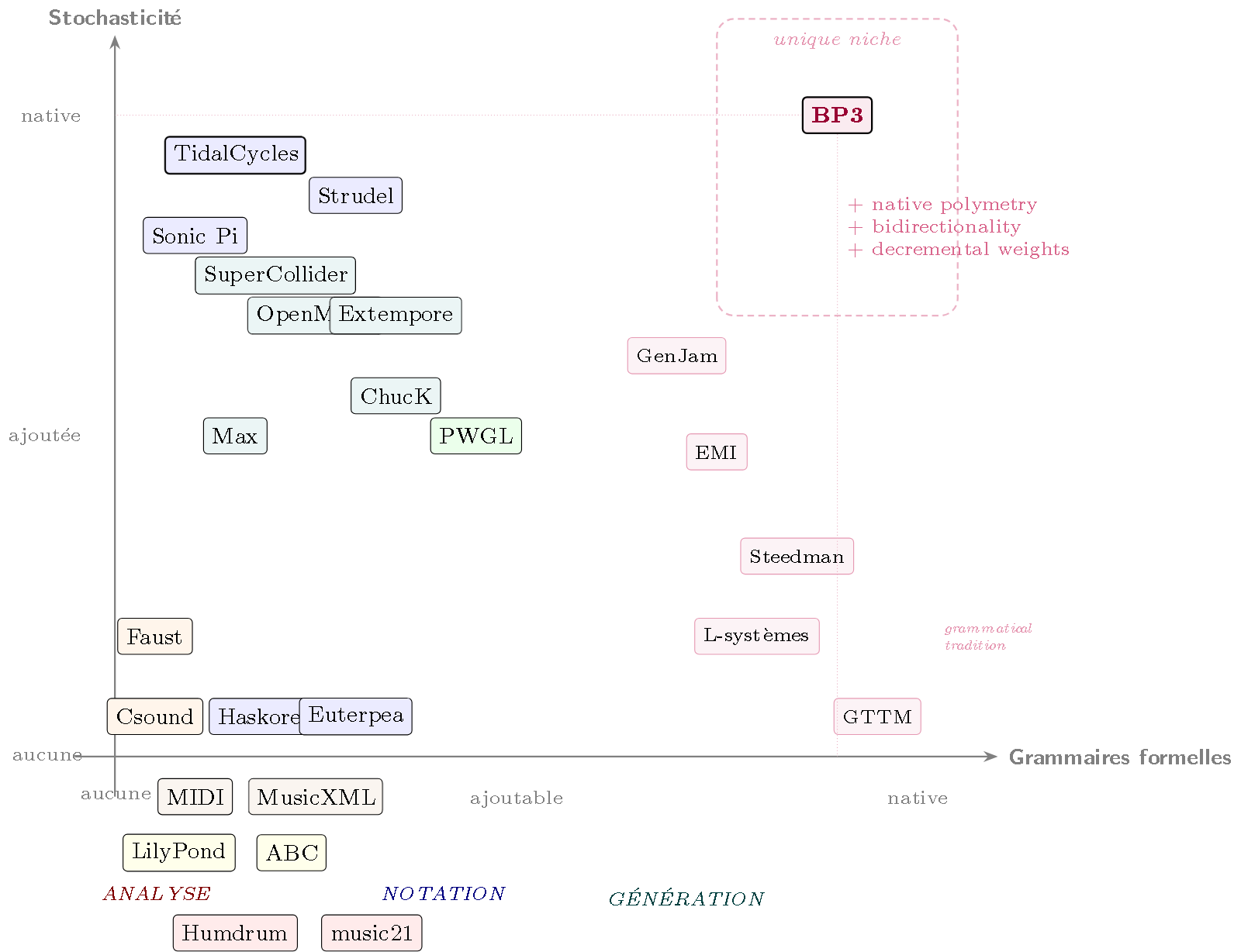

Musical DSLs embody compromises between three axes — structural expressivity, accessibility, real-time performance — and BP3 is the only one to combine formal grammars, native stochasticity, and polymetry.

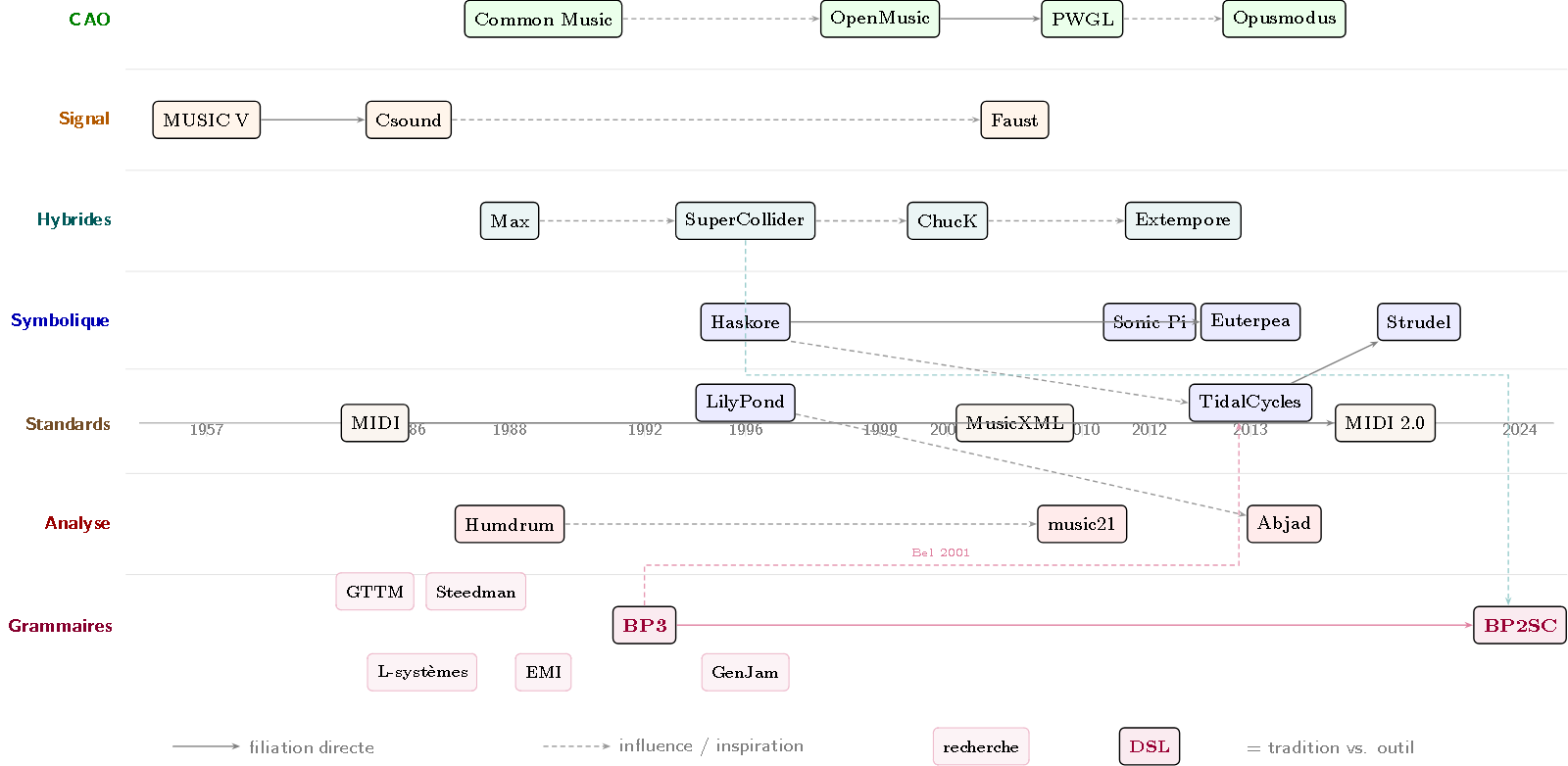

Genealogy: from MUSIC V to today

It all began in 1957, when Max Mathews wrote MUSIC at Bell Labs — the first program to generate sound by computer. From this common trunk, several parallel lineages emerged:

- The signal lineage: MUSIC (1957) → MUSIC V (1968) → Csound (1986) → Faust (2004). The basic unit is the audio sample. One thinks in oscillators, filters, envelopes.

- The symbolic lineage: Haskore (1996) → Euterpea (2013) → TidalCycles (2013) → Strudel (2022). The basic unit is the note or pattern. One thinks in structures, transformations, compositions.

- The algorithmic composition (AC) lineage: Common Music (1989) → OpenMusic (1999) → PWGL (2002) → Opusmodus (2015). Computer-assisted composition, born at Stanford and IRCAM, where compositional processes are programmed in Lisp.

- The analysis lineage: Humdrum (1988) → music21 (2010). The basic unit is the corpus — music is not generated, it is studied.

- The notation lineage: LilyPond (1996) → Abjad (2008). The “LaTeX of music” — the score is described, the compiler engraves it.

Between these lineages, hybrid systems cross layers: Max/MSP (1988), SuperCollider (1996), ChucK (2003), Extempore (2011). And beneath all this, a protocol has connected almost all systems since 1983: MIDI — the lingua franca of digital music.

And then there’s BP3 (1992) — which doesn’t fit into any of these lineages. Its basic unit is neither the signal nor the pattern, but the rewrite rule. It is this singularity that the rest of the article will illuminate.

Figure 1 — Genealogy of formal music languages. Seven lineages: AC (green), signal (orange), hybrids (turquoise), symbolic (blue), standards (brown), analysis (red), grammars (purple). The grammar lineage distinguishes the research tradition (GTTM, Steedman, L-systems, EMI, GenJam — thin nodes) from the operational DSL (BP3/BP2SC — thick nodes). The BP3 → TidalCycles connection (via [Bel2001]) crosses lineages.

Guided tour

Csound (Vercoe, 1986) — the veteran

Csound is the direct descendant of MUSIC V. Created by Barry Vercoe at the MIT Media Lab, it separates the description of sound (orchestra — the instruments) from the description of music (score — the notes). This “orchestra/score” paradigm dominated synthesis for 30 years.

instr 1

aOut poscil 0.5, 440

outs aOut, aOut

endin

i 1 0 2 ; plays instrument 1 at t=0 for 2 seconds

M3 Layer: Signal (level 1). Csound operates at the lowest level — it directly manipulates the waveform. Community: still active after 40 years, with thousands of composers and researchers. Limitation: no concept of “musical structure” — patterns and hierarchies do not exist in the language.

Max (Puckette, 1988, IRCAM) — visual dataflow

Created by Miller Puckette at IRCAM, Max is the first visual programming environment for music. Boxes are connected by cables — sound flows like water through pipes.

[mtof] → [osc~ ] → [*~ 0.5] → [dac~]

M3 Layer: Dataflow (level 2). Max routes audio streams and control messages in a graph. Historical note: Max became commercial (Cycling ’74, now Ableton). Miller Puckette created Pure Data (Pd) as a free alternative, with the same philosophy. Limitation: polymetry and stochasticity exist, but must be manually wired — nothing is native.

OpenMusic (IRCAM, 1999) — assisted composition

OpenMusic is a computer-assisted composition (CAC) environment, stemming from the Lisp tradition at IRCAM (PatchWork, then OpenMusic). It is a visual system like Max, but oriented towards the score rather than the signal: musical objects (chords, sequences, rhythms) are manipulated via graphical programs.

;; Sequence of 3 notes in OpenMusic

(make-instance 'chord-seq

:lmidic '(6000 6400 6700) ; C-E-G in midicents

:ldur '(500 500 500)) ; durations in ms

M3 Layer: Generative (level 5), but notation-oriented. OpenMusic produces scores, not sound directly. It excels in constraint-based composition — defining rules (allowed intervals, harmonic densities) and letting the system search for solutions. Connection: stems from the tradition of Pierre Boulez and spectral music at IRCAM. Limitation: no real-time — it’s a studio tool, not a performance tool.
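The midicent values in the OpenMusic snippet above (MIDI note number × 100) can be related to frequencies with a short calculation. The Python sketch below is illustrative only: the function name `midicents_to_hz` is hypothetical and not part of OpenMusic, and it assumes 12-tone equal temperament with A4 (MIDI note 69) tuned to 440 Hz.

```python
def midicents_to_hz(midicents, a4_hz=440.0):
    """Convert midicents (MIDI note number x 100) to a frequency in Hz.

    Assumption: 12-tone equal temperament, A4 = MIDI note 69 = a4_hz.
    """
    midi_note = midicents / 100.0
    return a4_hz * 2.0 ** ((midi_note - 69.0) / 12.0)


if __name__ == "__main__":
    # The C-E-G values used in the chord-seq example
    for mc in (6000, 6400, 6700):
        print(mc, round(midicents_to_hz(mc), 2))
```

Working in midicents rather than integer note numbers leaves room for microtonal pitches: 6050, for instance, would sit a quarter tone above middle C.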

Common Music (Taube, 1989) — the algorithmic ancestor

Common Music is one of the oldest algorithmic composition environments, created by Heinrich Taube at Stanford (CCRMA). Written in Common Lisp, contemporary with BP3, it defined the vocabulary of CAC before OpenMusic took over.

;; Common Music: random sequence of 12 notes

(process repeat 12

output (new midi :keynum (between 60 84)

:duration (pick .25 .5)))

M3 Layer: Generative (level 5). Common Music offers an integrated scheduler, compositional processes (loops, conditions, randomness), and MIDI/audio outputs. Taube’s book Notes from the Metalevel (Taube, 2004) remains a reference in algorithmic composition. Strength: trained an entire generation of composers (1990s–2000s). Limitation: reduced community today, superseded by SuperCollider and OpenMusic. But its influence persists: it is the common ancestor of the entire CAC lineage.

SuperCollider (McCartney, 1996) — the Swiss Army knife

Already presented in detail in I3. Here, let’s focus on what makes it unique in the landscape: its Pattern system.

Pbind(

\note, Pseq([0, 4, 7, 12], inf),

\dur, 0.25,

\amp, Prand([0.5, 0.8, 1.0], inf)

).play;

SuperCollider’s Patterns (Pbind, Pseq, Prand, Pwrand…) constitute a language in themselves — a DSL (Domain-Specific Language) within SuperCollider. They allow sequencing events with random choices, repetitions, and alternations.

M3 Layer: spans levels 1 to 6 (signal + events + patterns). This is its strength — and its complexity. Stochasticity is available (Prand, Pwrand), but polymetry remains partial: independent temporal streams must be manually synchronized.

ChucK (Wang & Cook, 2003) — time as a type

ChucK is the only music language where time is a native type of the language. Created by Ge Wang and Perry Cook at Princeton, it introduces the concept of strongly-timed programming: time is not a callback or a sleep — it’s a value manipulated like an integer or a string.

SinOsc s => dac;

440 => s.freq;

1::second => now; // advances time by 1 second

The => operator (the ChucK operator) controls both data flow and temporal flow. 1::second => now does not “sleep”: it explicitly advances the temporal cursor. These semantics allow concurrent flows (shreds) to run with sample-accurate temporal control.
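To make the idea of explicitly advancing a shared logical clock more concrete, here is a minimal Python sketch. It is not how ChucK is implemented: the names `run` and `ticker` are hypothetical, shreds are modelled as generators that yield the number of samples they want to advance, and the sample rate of 44100 is an assumption.

```python
import heapq

SAMPLE_RATE = 44100  # assumed sample rate, in samples per second


def ticker(period):
    """Build a shred that advances logical time by `period` samples forever."""
    def shred():
        while True:
            yield period  # plays the role of `period::samp => now`
    return shred


def run(shreds, until):
    """Run (name, shred factory) pairs on one shared logical clock.

    Returns the list of (time_in_samples, name) wake-ups before `until`.
    """
    log = []
    queue = []  # heap of (wake_time, order, name, generator)
    for order, (name, factory) in enumerate(shreds):
        heapq.heappush(queue, (0, order, name, factory()))
    while queue:
        now, order, name, gen = heapq.heappop(queue)
        if now >= until:
            break  # the heap is ordered by time, so nothing earlier remains
        try:
            wait = next(gen)  # the shred runs until it advances time
        except StopIteration:
            continue  # the shred finished; drop it
        log.append((now, name))
        heapq.heappush(queue, (now + wait, order, name, gen))
    return log


if __name__ == "__main__":
    shreds = [("fast", ticker(SAMPLE_RATE // 2)), ("slow", ticker(SAMPLE_RATE))]
    for time, name in run(shreds, until=2 * SAMPLE_RATE):
        print(time, name)
```

Because time only moves when a shred asks for it, the interleaving of the two shreds is fully determined by the durations they yield, which is the property the text calls deterministic concurrency.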

M3 Layer: Hybrid (levels 1–3). ChucK operates at the signal level with integrated synthesis, but with unparalleled event-based and temporal control. Strength: live coding, education (used at Stanford, Princeton), deterministic concurrency via shreds. Limitation: no TidalCycles-like patterns, no grammars — time is precise but structures remain flat.

Haskore / Euterpea (Hudak, 1996/2013) — musical algebra

Paul Hudak (Yale) asked the question: can music be represented as an algebraic type in Haskell? His answer, Haskore (1996), then its successor Euterpea (2013), define music as a recursive type:

-- Euterpea: a C major arpeggio

line [c 4 qn, e 4 qn, g 4 qn]

Music is a tree of combinators: line (sequence), chord (chord), Modify (transformation). Transformations are composed like mathematical functions: transposition, inversion, mirroring.
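The "tree of combinators" idea can be approximated outside Haskell. The following Python sketch is a loose analogy, not Euterpea's actual API: the classes `Note`, `Seq`, `Par` and the helpers `line`, `transpose`, `duration` are hypothetical names chosen for illustration, and pitches are assumed to be MIDI note numbers with `c 4` mapped to 60.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Note:
    pitch: int      # MIDI note number (assumption: c 4 = 60)
    dur: Fraction   # duration in whole notes


@dataclass(frozen=True)
class Seq:          # sequential composition: left, then right
    left: object
    right: object


@dataclass(frozen=True)
class Par:          # parallel composition: left together with right
    left: object
    right: object


def line(notes):
    """Fold a non-empty list of music values into nested Seq nodes."""
    result = notes[-1]
    for item in reversed(notes[:-1]):
        result = Seq(item, result)
    return result


def transpose(music, interval):
    """Shift every note of the tree by `interval` semitones."""
    if isinstance(music, Note):
        return Note(music.pitch + interval, music.dur)
    return type(music)(transpose(music.left, interval),
                       transpose(music.right, interval))


def duration(music):
    """Total duration: sum for Seq, maximum for Par."""
    if isinstance(music, Note):
        return music.dur
    if isinstance(music, Seq):
        return duration(music.left) + duration(music.right)
    return max(duration(music.left), duration(music.right))


if __name__ == "__main__":
    qn = Fraction(1, 4)
    arpeggio = line([Note(60, qn), Note(64, qn), Note(67, qn)])
    print(duration(arpeggio))          # three quarter notes
    print(transpose(arpeggio, 12))     # same tree, one octave up
```

The point of the design is that every transformation is a function over the tree, so transformations compose without ever touching a timeline or an audio buffer.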

M3 Layer: Functional/Pattern (level 6). The approach is purely algebraic — no synthesis, no real-time. Sound is produced by MIDI export. Strength: formal rigor is maximal — each operation has a precise semantic in Haskell’s type system. Limitation: no native polymetry, no stochasticity, no live performance.

Faust (Orlarey/GRAME, 2004) — pure DSP

Faust (Functional AUdio STream) is a special case: a pure functional language dedicated exclusively to signal processing. Created by Yann Orlarey at GRAME (Lyon), it compiles to C++, LLVM, WebAssembly, Rust — and generates VST, AU, CLAP plugins.

import("stdfaust.lib");

process = os.osc(440) * 0.5;

M3 Layer: Signal (level 1), and only the signal. Faust has no concept of “note”, “rhythm”, or “sequence”. It is a processing language, not a composition language. Strength: it is one of the few musical DSLs with a published formal semantics. Every Faust program denotes a signal processor, that is, a function from $n$ input signals to $m$ output signals, $f : \mathbb{S}^n \to \mathbb{S}^m$, where each signal in $\mathbb{S}$ is a function from discrete time to samples ($\mathbb{Z} \to \mathbb{R}$). Limitation: N/A for polymetry, stochasticity, or grammars, which are outside its domain.

TidalCycles (McLean, 2013) — cyclical patterns

TidalCycles (or Tidal) is an embedded DSL in Haskell, created by Alex McLean (Goldsmiths, University of London). It represents music as patterns — functions from time to events — manipulated by functional transformations.

d1 $ sound "bd [sn cp] hh*3"

d2 $ note "{0 3 7, 0 5}%4" # s "superpiano"

M3 Layer: Functional/Pattern (level 6). The basic unit is the cycle — a fixed duration that patterns recursively divide. The mini-notation (the syntax in quotes) allows expressing polymetry ({3 elements, 2 elements}) and stochasticity (?) in a few characters.
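The recursive division of a cycle can be sketched in a few lines of Python. This is a simplified model and not Tidal's implementation (Tidal patterns are functions queried over time spans): the function `subdivide` is a hypothetical name, the mini-notation is replaced by nested lists, and `hh*3` is approximated as a three-element sub-sequence.

```python
from fractions import Fraction


def subdivide(pattern, start=Fraction(0), length=Fraction(1)):
    """Return (onset, duration, name) events for a nested-list pattern.

    Each list shares its time span equally among its items; nested lists
    subdivide their own share again. Times are fractions of one cycle.
    """
    events = []
    step = length / len(pattern)
    for index, item in enumerate(pattern):
        onset = start + index * step
        if isinstance(item, list):
            events.extend(subdivide(item, onset, step))
        else:
            events.append((onset, step, item))
    return events


if __name__ == "__main__":
    # Rough equivalent of "bd [sn cp] hh*3"
    pattern = ["bd", ["sn", "cp"], ["hh", "hh", "hh"]]
    for onset, dur, name in subdivide(pattern):
        print(onset, dur, name)
```

Using exact fractions rather than floats keeps onsets such as 7/9 of a cycle free of rounding error, which matters once patterns are nested several levels deep.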

Lineage with Bel: McLean cites [Bel2001] “Rationalizing Musical Time” in 8+ publications (2007–2022). The cyclic representation of time, temporal ratios, polymetry as a superposition of cycles — all concepts that conceptually descend from the Bol Processor. This lineage will be explored in detail in M11 (coming soon).

Strength: Tidal is the flagship language of the Algorave movement — musical performances where code is projected onto a screen. With ~200+ cumulative citations and ~1800 GitHub stars, it is the most influential live coding DSL. Limitation: no hierarchical structure — patterns are flat, without a derivation tree.

Sonic Pi (Aaron, Cambridge, 2012) — education

Sonic Pi is a Ruby-based live coding environment, developed by Sam Aaron at the University of Cambridge in collaboration with the Raspberry Pi Foundation. Its goal: to make musical programming accessible to everyone, including children.

live_loop :beat do

sample :bd_haus

sleep 0.5

end

M3 Layer: between level 3 (event-based: sample, play, sleep) and level 6 (simple patterns via live_loop). Strength: single installer, integrated interface, 140+ samples, exemplary documentation, school curriculum. ~2 million downloads. Limitation: less expressive than TidalCycles for complex patterns, with no native polymetry and no mini-notation.

Extempore (Sorensen, 2011) — the live compiler

Extempore pushes live coding to its extreme: it is the only system that compiles DSP in real-time. Created by Andrew Sorensen, it embeds two languages — Scheme for high-level sequencing, and xtlang for low-level signal processing — with JIT (LLVM) compilation during performance.

;; Extempore: a 440 Hz sine oscillator, recompilable live

(bind-func dsp:DSP

(lambda (in time chan dat)

(* 0.3 (sin (* TWOPI 440.0

(/ (i64tof time) SR))))))

Where Sonic Pi or TidalCycles send messages to an external audio engine, Extempore modifies the synthesis function itself while it plays. An oscillator can be rewritten in concert — the transition is instantaneous, without interruption.

To understand what this means, one must distinguish three levels of real-time control in musical systems:

| Level | What changes | What remains fixed | Examples |
|---|---|---|---|
| 1. Parameters | Frequency, cutoff, amplitude… | Synth topology | Hardware (potentiometers), SuperCollider .set, MIDI CC |
| 2. Patterns | Sequencing, notes, rhythms | Topology + audio engine | SuperCollider Patterns, TidalCycles, Sonic Pi |
| 3. Topology | The DSP graph itself | Nothing: everything is recompilable | Extempore (unique in software) |

Level 1 is the classic synthesizer model: a Moog has a fixed circuit (VCO → VCF → VCA), but potentiometers continuously modulate everything. SuperCollider SynthDefs work exactly like this — arguments are the potentiometers, the graph is fixed at compilation. Level 2 is that of usual live coding — what is played changes, not how the sound is produced. Level 3, that of Extempore, is radically different: the synthesis function is recompiled while it is running.

In hardware, modular synthesizers (Eurorack, Buchla) approach level 3 — the topology is physically rewired. But rewiring is discrete (plugging/unplugging), not continuous and instantaneous like Extempore’s JIT recompilation. This uniqueness justifies its place in the landscape, even if 99% of live coding happens at levels 1 and 2.

M3 Layer: Signal + Generative (levels 1–5). Extempore is the only software system to operate at control level 3 — free topology recompilable live. Strength: no gap between composition and synthesis. Limitation: steep learning curve (xtlang resembles typed C), restricted community.

Strudel (McLean et al., 2022) — Tidal in the browser

Strudel is the JavaScript port of TidalCycles, designed to run directly in a web browser — zero installation, same semantics.

M3 Layer: identical to TidalCycles (level 6). Strudel inherits mini-notation, native polymetry, stochasticity. It bridges the gap between the accessibility of Sonic Pi (nothing to install) and the expressivity of TidalCycles (composable patterns). This is a sign that McLean’s cyclical pattern model — itself inspired by Bel — has become a reference paradigm.

Programmable notation

LilyPond (Nienhuys & Nieuwenhuizen, 1996) — the LaTeX of music

LilyPond is to the musical world what LaTeX is to the academic world: a description language that compiles to a professional-quality score. Created by Han-Wen Nienhuys and Jan Nieuwenhuizen, it uses a readable text format that the compiler transforms into typographic engraving.

\relative c' {

\time 3/4

c4 e g | c2. |

\key g \major

b4 d g | b2.

}

M3 Layer: Notational (level 4). LilyPond does not generate music and does not produce sound — it engraves scores. But its text format makes it a formal language in its own right, with its own grammar (parsed by GNU Bison). Strength: unparalleled engraving quality, de facto standard for composers who program, basis for Abjad (Python API). Limitation: no synthesis, no interaction — it’s a publication tool, not a creation tool.

Analysis tools

music21 (Cuthbert & Ariza, 2010) — computational analysis

music21 is an anomaly in the landscape: it is the only major system oriented towards analysis rather than generation. Created by Michael Cuthbert at MIT, it is a Python toolkit for computational musicology — it is used to study corpora, not to compose.

# music21: analyze a Bach chorale

from music21 import *

bach = corpus.parse('bwv66.6')

key = bach.analyze('key') # F# minor

bach.measures(1, 4).show() # displays the first 4 measures

The contrast with the rest of the landscape is striking: where all other systems produce music, music21 dissects it. It is the tool of choice for computational musicology — harmonic analysis, melodic pattern detection, statistics on corpora of thousands of works.

M3 Layer: Analytical. music21 operates on symbolic representations (scores) but in recognition mode, not generation. Strength: integrated corpus (Bach, Beethoven, folk), harmonic, melodic, rhythmic analysis tools, interface with LilyPond and MIDI. Over 2500 citations. Limitation: no synthesis, no real-time.

Exchange standards

None of the preceding languages exist in a vacuum. Three standards constitute the common infrastructure of the landscape — the protocols and formats that almost all systems speak or consume.

MIDI (Smith & Kakehashi, 1983) — the universal protocol

MIDI (Musical Instrument Digital Interface) is not a language but a protocol: a standard for transmitting musical events (note on/off, velocity, controllers) between instruments and software. It is the connective tissue of the entire ecosystem: Csound, SuperCollider, BP3, TidalCycles, and music21 all speak MIDI.

Its limitations are known: 7-bit resolution (128 velocity values), no concept of “phrase” or “chord”, no notation. But its universality is unmatched after 40+ years — it is the only standard that all systems in the table support, directly or indirectly.
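The 7-bit limits are easy to see when a message is built by hand. The sketch below assembles MIDI 1.0 note-on and note-off channel messages as raw bytes; the status bytes 0x90 and 0x80 come from the MIDI 1.0 specification, while the helper names `note_on` and `note_off` are hypothetical and belong to no particular library.

```python
def _check(name, value, upper):
    """Reject values outside the range the protocol can encode."""
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be in 0..{upper}, got {value}")


def note_on(channel, note, velocity):
    """Build a 3-byte MIDI 1.0 note-on message.

    channel: 0..15 (4 bits), note and velocity: 0..127 (7 bits each).
    """
    _check("channel", channel, 15)
    _check("note", note, 127)
    _check("velocity", velocity, 127)
    return bytes([0x90 | channel, note, velocity])


def note_off(channel, note, velocity=0):
    """Build a 3-byte MIDI 1.0 note-off message."""
    _check("channel", channel, 15)
    _check("note", note, 127)
    _check("velocity", velocity, 127)
    return bytes([0x80 | channel, note, velocity])


if __name__ == "__main__":
    print(note_on(0, 60, 100).hex())   # middle C on the first channel
    print(note_off(0, 60).hex())
```

Each message describes one isolated event: nothing in these three bytes says which phrase, chord, or voice the note belongs to, which is the abstraction gap discussed in this section.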

MIDI 2.0 (2020) corrects technical limitations after 37 years: 32-bit resolution, per-note articulation, property exchange, full backward compatibility. The update resolves resolution issues but does not change the fundamental level of abstraction — MIDI 2.0 remains an event protocol, not a structural language.

MusicXML (Good, 2004) — the PDF of the score

MusicXML is to the world of notation what PDF is to text: a standardized exchange format for scores. Created by Michael Good (Recordare), now maintained by the W3C Music Notation Community Group, it allows transferring scores between Finale, Sibelius, MuseScore, LilyPond — and accessing them from music21 or Humdrum.

MusicXML encodes the visual score (staves, measures, notes, dynamics) in XML. It is a description format, not a generative language — but it has become the de facto standard for interoperability of digital scores.

MIDI vs. MusicXML: MIDI encodes the performance (when to press which key, at what velocity). MusicXML encodes the score (which note, in which measure, with what notation). These are two complementary layers — one event-based (M3 level 3), the other notational (M3 level 4). Neither encodes musical structure (M3 level 5) — this is where generative DSLs and BP3 come in.

Other notable systems

The landscape is not limited to these profiles. Five systems deserve a mention for their influence or originality.

PWGL / Opusmodus — Successors of OpenMusic in the CAC lineage. PWGL (PatchWork Graphics Library), created by Mikael Laurson at the Sibelius Academy (Helsinki, 2002), extended OpenMusic’s visual paradigm with a more powerful constraint editor and audio integration. Opusmodus (2015) continues this lineage with a modernized Lisp interface and composer-oriented documentation. M3 Layer: Generative (level 5).

Alda — Ultra-accessible text-based language created by Dave Yarwood (2015). Its minimalist syntax (piano: c d e f g a b > c) allows writing music as plain text. With 5700 GitHub stars, it is one of the most popular musical DSLs — but it has no academic publications. The “Markdown of music”: perfect for quick sketches, limited for research.

Humdrum/\*\*kern: a Unix toolkit for musical analysis created by David Huron in 1988. Each tool does one thing (extract voices, count intervals, search for patterns), and they are piped together. The \*\*kern format encodes the score as a table of temporal columns (spines). A precursor to music21, Humdrum is still used in computational musicology for its modular philosophy and vast encoded corpus.

ABC notation — The most compact format for encoding music. |:GABc dedB| encodes a complete melodic phrase. Created by Chris Walshaw (1991), it is the de facto standard for digitized folk tradition — thousands of Irish, Scottish, and Breton tunes encoded in a few characters. Not a programming language, but a format of remarkable elegance.

Abjad — Python API for LilyPond, created by Trevor Bača and Josiah Wolf Oberholtzer (2008). Abjad allows contemporary composers to generate complex scores programmatically — loops, algorithms, constraints in Python, rendered in LilyPond. It is the bridge between algorithmic composition and engraving.

Grammar systems — the forgotten tradition

BP3 is not an isolated UFO. It is part of a tradition of systems that use formal grammars to model music — a tradition often ignored by musical DSL communities.

GTTM (Generative Theory of Tonal Music, Lerdahl & Jackendoff, 1983) — The foundational theoretical framework. GTTM proposes a generative grammar of tonal music, with well-formedness and preference rules for metric, grouping, temporal reduction, and prolongation structures. It is not software but an analytical formalism — direction ANAL, not GEN. Its theoretical influence is immense, but no system implements GTTM in a complete and usable way.

Steedman (1984, 1996) — Mark Steedman showed that jazz chord sequences can be described by context-free grammars. His work connects formal linguistics (combinatory categorial grammars) to harmonic analysis. Direction ANAL — these grammars analyze existing chord charts but do not generate performable music.

EMI / Experiments in Musical Intelligence (Cope, ~1987) — David Cope created a system that simulates compositional styles via augmented transition networks and a database of signatures. EMI produced “Bach-like” or “Chopin-like” works that fooled experts. Direction GEN — but the formalism is closer to Markov chains than to grammars in the strict sense.

GenJam (Biles, 1994) — A jazz improvisation system that combines genetic algorithms and grammars. GenJam evolves musical phrases through selection and mutation, guided by grammatical rules. It is one of the first systems to marry grammars and stochasticity — but via evolution, not via decremental weights like BP3.

Musical L-systems — L-systems (Lindenmayer, 1968), originally designed to model plant growth, have been adapted to music by several researchers (Prusinkiewicz, Worth & Stepney, McCormack). The principle: parallel rewrite rules generate self-similar structures — musical fractals. Several implementations exist (in Max, SuperCollider, Python), but none has become a standalone DSL.
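Parallel rewriting is simple to demonstrate. The Python sketch below uses Lindenmayer's classic two-symbol system (A → AB, B → A); the function name `rewrite` and the mapping from symbols to MIDI pitches are arbitrary assumptions made for illustration and are not taken from any of the cited musical L-system implementations.

```python
def rewrite(axiom, rules, generations):
    """Apply the rules to every symbol in parallel, `generations` times.

    Symbols without a rule are copied unchanged.
    """
    current = axiom
    for _ in range(generations):
        current = "".join(rules.get(symbol, symbol) for symbol in current)
    return current


RULES = {"A": "AB", "B": "A"}      # Lindenmayer's original algae system
PITCHES = {"A": 60, "B": 67}       # hypothetical mapping: A -> C4, B -> G4


def to_pitches(string):
    """Translate a derived string into a list of MIDI note numbers."""
    return [PITCHES[symbol] for symbol in string]


if __name__ == "__main__":
    for generation in range(5):
        derived = rewrite("A", RULES, generation)
        print(generation, derived, to_pitches(derived))
```

Every symbol is rewritten at each step, which is what distinguishes an L-system from the sequential derivations of a Chomsky-style grammar, where one rule is applied at a time.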

BP3 distinguishes itself from all these systems by three features: it is the only one to be a usable DSL (not a theoretical framework), the only one to combine grammars and native stochasticity (decremental weights, not genetic algorithms), and the only one to work in both directions (GEN+ANAL). Musical grammar systems are either analytical (GTTM, Steedman) or generative (EMI, GenJam, L-systems) — never both.
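The contrast between decremental weights and a plain weighted choice can be sketched as follows. This is an illustration under stated assumptions, not BP3's implementation: it assumes that a rule's weight is reduced by a fixed decrement each time the rule is selected and that rules whose weight has reached zero are no longer candidates. The function name `pick_with_decrement` is hypothetical.

```python
import random


def pick_with_decrement(weights, decrements, rng):
    """Pick a rule index by weighted random choice, then lower its weight.

    `weights` is modified in place. Returns None once every weight is zero.
    Assumption: weights never go below zero.
    """
    candidates = [i for i, weight in enumerate(weights) if weight > 0]
    if not candidates:
        return None
    chosen = rng.choices(
        candidates, weights=[weights[i] for i in candidates]
    )[0]
    weights[chosen] = max(0, weights[chosen] - decrements[chosen])
    return chosen


if __name__ == "__main__":
    rng = random.Random(0)          # seeded only to make the demo repeatable
    weights = [50, 50]              # two competing rules
    decrements = [25, 0]            # the first rule wears out, the second does not
    picks = [pick_with_decrement(weights, decrements, rng) for _ in range(8)]
    print(picks, weights)
```

With these numbers the first rule can be chosen at most twice, after which only the second remains available: the renewal of choices comes from the weights themselves rather than from extra control logic.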

And many others… The landscape also includes Overtone (Clojure, ancestor of Sonic Pi, 5800+ stars), FoxDot/Renardo (Python live coding), ixi lang (minimalist syntax grafted onto SuperCollider), Gibber (JavaScript, web precursor to Strudel), ORCA (2D spatial sequencer, 4900 stars), and Glicol (Rust + WebAssembly, the most recent). The table below is limited to the 24 systems discussed above and does not list them.

Comparative table

Here are the 24 systems, positioned according to discriminating criteria. Three columns deserve an explanation:

- Formalism: the underlying formal model of the language (grammar, algebra, protocol, schema, etc.).

- Stochasticity: native = integrated into the language’s paradigm (BP3’s decremental weights, TidalCycles’ ?); integrated = available via language functions/objects (SC’s Prand, Sonic Pi’s .choose); manual = requires explicit wiring.

- Direction: GEN = produces music; ANAL = analyzes existing music; DESC = describes/encodes (notation, protocol); GEN+ANAL = both.

| Language | Year | Formalism | M3 Layer | Polymetry | Stochasticity | Direction | Real-time |
|---|---|---|---|---|---|---|---|
| MIDI | 1983 | binary protocol | 3-Event-based | — | — | DESC | yes |
| Csound | 1986 | orchestra/score | 1-Signal | — | — | GEN | yes |
| Humdrum | 1988 | tabular spines (Unix) | Analysis | — | — | ANAL | — |
| Max | 1988 | dataflow graph | 2-Dataflow | manual | manual | GEN | yes |
| Common Music | 1989 | Lisp processes | 5-Generative | — | integrated | GEN | — |
| ABC | 1991 | textual notation | 4-Notational | — | — | DESC | — |
| BP3 | 1992 | Type 2+ grammars (MCSL) | 5-Generative | native | native | GEN+ANAL | via BP2SC |
| Haskore | 1996 | Music algebra (Haskell) | 6-Functional | — | — | GEN | — |
| LilyPond | 1996 | CFG (GNU Bison) | 4-Notational | — | — | DESC | — |
| SuperCollider | 1996 | OOP + Pattern DSL | 1→6 | partial | integrated | GEN | yes |
| OpenMusic | 1999 | visual patches (Lisp) | 5-Generative | manual | integrated | GEN | — |
| PWGL | 2002 | visual patches (Lisp) | 5-Generative | manual | integrated | GEN | — |
| ChucK | 2003 | strongly-timed concurrent | 1–3 | — | integrated | GEN | yes |
| MusicXML | 2004 | XML Schema (W3C) | 4-Notational | — | — | DESC | — |
| Faust | 2004 | denotational sem. | 1-Signal | N/A | — | GEN | yes |
| Abjad | 2008 | Python API / LilyPond | 4–5 | — | integrated | GEN | — |
| music21 | 2010 | Python API (toolkit) | Analysis | — | — | ANAL | — |
| Extempore | 2011 | Scheme + xtlang (LLVM) | 1–5 | — | integrated | GEN | yes |
| Sonic Pi | 2012 | imperative DSL (Ruby) | 3–6 | manual | integrated | GEN | yes |
| Euterpea | 2013 | Music algebra (Haskell) | 6-Functional | — | — | GEN | — |
| TidalCycles | 2013 | pattern algebra (Haskell) | 6-Functional | native | native | GEN | yes |
| Alda | 2015 | textual notation | 4-Notational | — | — | DESC | — |
| Opusmodus | 2015 | Lisp DSL | 5-Generative | manual | integrated | GEN | — |
| Strudel | 2022 | pattern algebra (JS) | 6-Functional | native | native | GEN | yes |

The Formalism column shows that while each language has a formal model, only BP3 is based on formal grammars in the Chomsky sense — rewrite rules that define an entire musical language. Faust has a denotational semantic, Haskore a type algebra, TidalCycles a pattern algebra — but none use the grammatical formalism as a model of music itself. Combined with GEN+ANAL Direction, native Polymetry, and native Stochasticity, BP3 remains alone in its niche.

BP3’s niche

Why BP3 is NOT a DSL like the others

BP3 is not a DSL in the usual sense. It is not a programming language in which one writes music — it is a system of formal grammars in which one defines families of music. The difference is fundamental: a TidalCycles program produces one pattern. A BP3 grammar produces all phrases of a musical language.

And unlike music21 or Humdrum, which only analyze existing music, BP3 works in both directions: besides generating, it can verify that a sequence belongs to the language defined by the grammar. Out of 24 systems, it is the only one that reasons in both directions.

Figure 2 — Positioning of musical systems according to two axes: formal grammars (horizontal) and stochasticity (vertical). To the right, the grammatical tradition (GTTM, L-systems, Steedman, EMI, GenJam — thin purple nodes) gradually increases in stochasticity without ever reaching BP3’s niche, which combines native grammars + native stochasticity + polymetry + bidirectionality.

Three unique features

1. Formal grammars as a musical model. Music generation by grammars has existed since the 1980s — Prusinkiewicz’s L-systems, Steedman’s jazz grammars, Cope’s EMI. And several systems in the table can implement grammars: OpenMusic via constraint patches, SuperCollider via dedicated quarks, LilyPond even uses a CFG (GNU Bison) to parse its input. But in all these cases, grammar is one tool among others or an implementation detail. BP3 is the only system in the landscape where rewrite rules are the native paradigm (L1): the user writes S → A B, A → do re | mi fa, and the music derives from these rules — with an operational semantic formalizable by SOS (L6). This is the only approach where expressive power is analyzable: a BP3 grammar can be positioned in Chomsky’s hierarchy (Type 2+ / MCSL, see L9).

2. Native stochasticity. Several languages offer randomness — Prand in SuperCollider, ? in TidalCycles, .choose in Sonic Pi. But BP3 goes further with decremental weights (B4): a weight that decreases with each use, ensuring that choices are renewed without intervention. And the RND mode (B3) — weighted random choice among applicable rules — is not an addition: it is integrated into the derivation mechanism itself.

3. Native polymetry. TidalCycles and Strudel share this trait — a direct inheritance from Bel. But BP3 expresses polymetry within the grammar itself: {3, do re mi, fa sol} means two voices in a 3:2 ratio, with exact durations in rational numbers (B5). No quantization, no approximation — temporal structures are mathematically precise.
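The arithmetic behind the polymetric example can be checked with exact rationals. The Python sketch below is one reading of the expression `{3, do re mi, fa sol}` given in the text, not BP3's time-setting algorithm: it assumes each voice is spread evenly over the stated total duration, and the function name `polymetric` is hypothetical.

```python
from fractions import Fraction


def polymetric(total, voices):
    """Spread each voice evenly over `total` beats.

    Returns one list per voice of (onset, duration, symbol) triples,
    with all times kept as exact fractions of a beat.
    """
    result = []
    for voice in voices:
        step = Fraction(total) / len(voice)
        result.append(
            [(index * step, step, symbol) for index, symbol in enumerate(voice)]
        )
    return result


if __name__ == "__main__":
    upper, lower = polymetric(3, [["do", "re", "mi"], ["fa", "sol"]])
    print(upper)   # three notes of one beat each
    print(lower)   # two notes of 3/2 beats each
```

Under this reading the second voice starts its notes at beats 0 and 3/2, so the two voices line up again only at the end of the three-beat span, with no rounding at any point.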

And also: bidirectionality

Beyond these three unique features, BP3 is the only musical system in the landscape to operate in three modes (B8):

- PROD (production): generate phrases from a grammar

- ANAL (analysis): verify that a sequence belongs to the defined language

- TEMP (templates): use analytical wildcards to accept families of sequences

This bidirectionality, studied in detail in L13, has no equivalent in any of the 24 systems in the table. The contrast is now visible in the Direction column: music21 and Humdrum analyze (ANAL), the notation and exchange formats describe (DESC), the remaining systems generate (GEN), and BP3 is the only one to do both (GEN+ANAL). This is a sign that BP3 operates at a different level of abstraction: where other languages execute music, BP3 reasons about musical languages.
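What it means for one grammar to serve both production and analysis can be shown on a toy scale. The Python sketch below reuses the rules S → A B and A → do re | mi fa quoted earlier; the rule for B is a hypothetical addition (the text does not define it), the function names `produce` and `accepts` are invented for this example, and membership is tested by brute-force enumeration, which only works for finite, non-recursive grammars and says nothing about how BP3 itself performs analysis.

```python
from itertools import product

GRAMMAR = {
    "S": [["A", "B"]],
    "A": [["do", "re"], ["mi", "fa"]],
    "B": [["sol"], ["la"]],   # hypothetical rule, not given in the text
}


def produce(symbol, grammar):
    """Production: list every terminal phrase derivable from `symbol`.

    Symbols without a rule are treated as terminals.
    """
    if symbol not in grammar:
        return [(symbol,)]
    phrases = []
    for alternative in grammar[symbol]:
        expansions = [produce(part, grammar) for part in alternative]
        for combination in product(*expansions):
            phrases.append(tuple(t for piece in combination for t in piece))
    return phrases


def accepts(sequence, grammar, start="S"):
    """Analysis: does `sequence` belong to the language of the grammar?"""
    return tuple(sequence) in set(produce(start, grammar))


if __name__ == "__main__":
    for phrase in produce("S", GRAMMAR):
        print(" ".join(phrase))
    print(accepts(["mi", "fa", "la"], GRAMMAR))
    print(accepts(["do", "fa", "sol"], GRAMMAR))
```

The same dictionary of rules drives both functions, which is the property the text attributes to BP3: the grammar is a definition of a musical language, not a recipe for a single output.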

music21 analyzes what BP3 generates — but each ignores the other direction. An ideal system would combine music21’s corpus analysis with BP3’s grammatical generation. This complementarity confirms the diagnosis of L13: the generation/recognition asymmetry is a fundamental blind spot in the field.

The revisited triangle

In M3, we identified a triangle of compromises: exhaustiveness (encoding everything), compactness (little data), generativity (producing variations). Most languages sacrifice one axis:

- Csound and Faust maximize exhaustiveness (full signal) at the cost of generativity

- TidalCycles and Euterpea maximize generativity (infinite patterns) at the cost of exhaustiveness

- MIDI maximizes compactness at the cost of everything else

BP3 is the only one to combine compactness (a grammar of a few rules = an entire language) and generativity (stochastic choices, multiple modes) without sacrificing structural expressivity (hierarchies, polymetry, flags). And the enrichment of the landscape reinforces this observation: neither ChucK (strongly-timed but without structure), nor Extempore (compiled DSP but without grammars), nor Common Music (algorithmic but without bidirectionality) occupy this territory. The price: BP3 does not perform synthesis — that is the role of BP2SC (B7) and SuperCollider.

Key takeaways

- Six historical lineages — signal, symbolic, AC, analysis, notation, and hybrids — each with its own basic unit (sample, pattern, process, corpus, score, stream).

- The M3 framework organizes the landscape: each language operates at one or more levels of abstraction (signal, dataflow, event-based, notational, generative, functional).

- 24 systems, none cover everything — SuperCollider comes closest to all six levels (1 to 6), Extempore compiles DSP live, ChucK treats time as a native type.

- Four criteria distinguish BP3: formal grammars (unique), native stochasticity (decremental weights), native polymetry (exact ratios), and bidirectional direction (GEN+ANAL).

- TidalCycles is BP3’s closest cousin: same interest in polymetry, same inspiration (Bel, 2001), but a fundamentally different paradigm (functional patterns vs. production grammars).

- The generation/analysis asymmetry is systemic: music21 and Humdrum analyze, all others generate, and only BP3 does both. This fracture runs through the entire landscape.

- Bidirectionality is the ultimate niche: out of 24 systems, BP3 is the only one to combine grammars + stochasticity + polymetry + bidirectionality.
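The "exact ratios" behind polymetry can be sketched with rational arithmetic. The Python example below is an illustration, not BP3 code: the helper `onsets` is a hypothetical name, and the example simply places three events against two over the same span using `fractions.Fraction`.

```python
from fractions import Fraction

def onsets(n_events, span=Fraction(1)):
    """Onset times of n equal events over the same span, as exact fractions."""
    return [span * Fraction(i, n_events) for i in range(n_events)]

three = onsets(3)   # 0, 1/3, 2/3
two = onsets(2)     # 0, 1/2

# Merged timeline of the 3-against-2 figure, with no rounding error.
merged = sorted(set(three) | set(two))
```

Here `merged` is 0, 1/3, 1/2, 2/3. Keeping onsets as fractions rather than floats means the two layers realign exactly at every span boundary, however long the sequence runs.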

To go further

Bibliographical references

- Mathews, M. V. (1969). The Technology of Computer Music. MIT Press. — The foundational work (MUSIC V).

- Vercoe, B. (1986). Csound: A Manual for the Audio Processing System. MIT Media Lab.

- Puckette, M. (1991). “Combining Event and Signal Processing in the MAX Graphical Programming Environment”. Computer Music Journal, 15(3).

- McCartney, J. (2002). “Rethinking the Computer Music Language: SuperCollider”. Computer Music Journal, 26(4).

- Hudak, P. (1996). “Haskore Music Notation — An Algebra of Music”. Journal of Functional Programming, 6(3).

- Hudak, P. (2014). The Haskell School of Music — From Signals to Symphonies. Cambridge University Press.

- Assayag, G. et al. (1999). “Computer-Assisted Composition at IRCAM: From PatchWork to OpenMusic”. Computer Music Journal, 23(3).

- Orlarey, Y., Fober, D. & Letz, S. (2004). “Syntactical and Semantical Aspects of Faust”. Soft Computing, 8(9).

- McLean, A. (2014). “Making Programming Languages to Dance to: Live Coding with Tidal”. In Proc. FARM.

- McLean, A. & Wiggins, G. (2010). “Tidal – Pattern Language for the Live Coding of Music”. In Proc. SMC.

- Aaron, S. & Blackwell, A. F. (2013). “From Sonic Pi to Overtone”. In Proc. FARM.

- Roos, F. & McLean, A. (2022). “Strudel: Live Coding Patterns on the Web”.

- Wang, G. & Cook, P. (2003). “ChucK: A Concurrent, On-the-fly Audio Programming Language”. In Proc. ICMC.

- Taube, H. (1991). “Common Music: A Music Composition Language in Common Lisp and CLOS”. Computer Music Journal, 15(2).

- Taube, H. (2004). Notes from the Metalevel: An Introduction to Computer Composition. Routledge.

- Sorensen, A. & Gardner, H. (2010). “Programming with Time: Cyber-physical Programming with Impromptu”. In Proc. OOPSLA.

- Cuthbert, M. S. & Ariza, C. (2010). “music21: A Toolkit for Computer-Aided Musicology”. In Proc. ISMIR.

- Huron, D. (2002). “Music Information Processing Using the Humdrum Toolkit”. Computer Music Journal, 26(2).

- Nienhuys, H.-W. & Nieuwenhuizen, J. (2003). “LilyPond, a System for Automated Music Engraving”. In Proc. XIV Colloquium on Musical Informatics.

- Laurson, M. & Kuuskankare, M. (2002). “PWGL: A Novel Visual Language”. In Proc. ICMC.

- Trevino, J. (2013). “Compositional and Analytic Applications of Automated Music Notation via Object-Oriented Programming”. PhD Thesis, UCSD.

- MIDI Manufacturers Association (1983). MIDI 1.0 Detailed Specification. — The original standard.

- MIDI Manufacturers Association (2020). MIDI 2.0 Specification. — 32-bit extension, backward compatible.

- Good, M. (2001). “MusicXML for Notation and Analysis”. In The Virtual Score, MIT Press.

- Bel, B. (2001). “Rationalizing Musical Time: Syntactic and Symbolic-Numeric Approaches”.

- Bel, B. & Kippen, J. (1992). “Modelling Music with Grammars”. In Computer Representations and Models in Music, Academic Press, 207-238.

- Lerdahl, F. & Jackendoff, R. (1983). A Generative Theory of Tonal Music. MIT Press.

- Steedman, M. (1984). “A Generative Grammar for Jazz Chord Sequences”. Music Perception, 2(1), 52-77.

- Cope, D. (1996). Experiments in Musical Intelligence. A-R Editions.

- Biles, J. A. (1994). “GenJam: A Genetic Algorithm for Generating Jazz Solos”. In Proc. ICMC.

- Prusinkiewicz, P. & Lindenmayer, A. (1990). The Algorithmic Beauty of Plants. Springer. — Chapter on music.

Links in the corpus

- I3 — SuperCollider in detail

- M3 — The six levels of abstraction

- M11 — TidalCycles and the Bel lineage

- B3 — Derivation rules and BP3 modes

- B4 — Flags and decremental weights

- B5 — Polymetry and temporal structures in BP3

- B7 — The BP2SC transpiler

- B8 — The three directions of BP3 (PROD/ANAL/TEMP)

- L1 — Chomsky’s hierarchy

- L13 — The generation/recognition duality

Glossary

- AC : Algorithmic Composition — an approach where software assists the composer with algorithms, without replacing their decisions. Lineage: Common Music → OpenMusic → PWGL → Opusmodus

- Dataflow : a programming paradigm where computation is a graph of data streams between processing nodes

- DSL : Domain-Specific Language — a programming language dedicated to a particular domain (here, music)

- Music engraving : the process of laying out a score according to professional typographic conventions. LilyPond automates this process

- JIT : Just-In-Time compilation — compilation of code at runtime. Extempore uses LLVM JIT to compile DSP live

- Live coding : a performative practice where the musician writes or modifies code in real-time in front of an audience

- MCSL : Mildly Context-Sensitive Language — a class of languages between context-free (Type 2) and context-sensitive (Type 1)

- MIDI : Musical Instrument Digital Interface, a universal protocol (1983) for transmitting musical events between instruments and software. MIDI 2.0 (2020) extends resolution to 32 bits

- Mini-notation : TidalCycles’ compact syntax for describing rhythmic patterns within quotes

- MusicXML : standardized XML exchange format (W3C) for digital scores. Interoperability between Finale, Sibelius, MuseScore, LilyPond

- Pattern : in SuperCollider or TidalCycles, an object that generates sequences of values according to rules

- PCFG : Probabilistic Context-Free Grammar — a context-free grammar enhanced with probabilities on rules

- Polymetry : superposition of metric structures with different ratios (e.g., 3 against 2)

- Spines : in Humdrum, vertical columns of a **kern file, each representing a voice or a musical dimension

- Strongly-timed : ChucK’s paradigm where time is a native type of the language, manipulable like any other data

- Transpiler : a program that converts source code from one language to another at the same level of abstraction

Prerequisites: M3, I3

Reading time: ~20 min

Tags: #musical-dsls #tidalcycles #faust #sonic-pi #csound #supercollider #haskore #openmusic #bp3 #live-coding #musical-grammars #musical-paradigms #chuck #common-music #extempore #music21 #lilypond #musical-analysis

Next article: M11 — TidalCycles: when the Bol Processor inspires live coding