L15) The Formulas of Asymmetry

From T_parse to Surprisal

In L13, we saw that generation and recognition are “operationally asymmetric.” But we remained at the level of intuition: O(n) versus O(n³), “generation is easier.” This article goes further: it dissects the formulas, one by one, to show that asymmetry is not a slogan — it is a mathematical result in six independent dimensions.

Where does this article fit in?

This article is the technical companion to L13. Where L13 presented asymmetry in accessible terms — puzzles, concerts, composers — this article presents the formulas that underpin it. It is an article in the L series (formal languages), with formulas at the forefront but always explained: no equation appears without stating what it means and why it matters.

If you are comfortable with the notations from L1 and L6, you will be on familiar ground.

Why is this important?

The intuition “generation is easy, analysis is difficult” is both true and misleading. True: there is a real complexity gap. Misleading: the true asymmetry is not a simple difference in difficulty — it is structural. The parser faces imposed constraints (the input is given, non-negotiable); the generator faces chosen constraints (it may or may not impose them on itself).

The formulas we will see don’t just say “one is slower than the other.” They show that asymmetry manifests along six independent axes — complexity, ambiguity, direction, information, inference, temporality — and that it is intrinsic to formal languages, not an artifact of a particular implementation.

The idea in one sentence

Generation-recognition asymmetry is formalized in six mathematically independent dimensions, from the complexity gap O(n) vs O(n³) to the surprisal S = 0 for the generator versus S > 0 for the parser.

Let’s explain step by step

1. The complexity gap: T_gen vs T_parse

Unconstrained generation is linear regardless of the grammar class: $T_{\text{gen}} = \mathcal{O}(n)$. Recognition, however, depends dramatically on the Chomsky level:

| Chomsky Level | $T_{\text{gen}}$ | $T_{\text{parse}}$ | Gap |

|---|---|---|---|

| Type 3 (regular) | $\mathcal{O}(n)$ | $\mathcal{O}(n)$ | none |

| Type 2 (context-free) | $\mathcal{O}(n)$ | $\mathcal{O}(n^3)$ | cubic |

| Type 2+ (TAG/MCFG) | $\mathcal{O}(n)$ | $\mathcal{O}(n^6)$ | power 6 |

| Type 1 (context-sensitive) | $\mathcal{O}(n)$ | $2^{\mathcal{O}(n)}$ | exponential |

| Type 0 (r.e.) | $\mathcal{O}(n)$ | $\to \infty$ | infinite |

Asymmetry grows with expressive power. But this one-dimensional view is misleading: in reality, each operation depends on two orthogonal axes — the grammar class and the task specification — with inverted sensitivity. This is differential coupling: generation is sensitive to the task (free vs constrained), recognition is sensitive to the grammar (Type 3 vs Type 0).

→ For the two complete complexity matrices and differential coupling, see L17.

2. Gen and parse as functions

We can formalize generation and parsing as two mathematical functions:

$\text{gen}: G \times D \to \Sigma^*$

Generation takes a grammar $G$ and a derivation sequence $d$ (the series of choices: which rule to apply at each step), and produces a string $w$. For a fixed $d$, the output $w$ is unique. The function is injective: one derivation path → one single string.

$\text{parse}: G \times \Sigma^* \to 2^{\mathcal{T}}$

Parsing takes a grammar $G$ and a string $w$, and returns a set of derivation trees $\{t_1, t_2, \ldots, t_k\}$. The notation $2^{\mathcal{T}}$ means “a subset of the set of all possible trees.” Three cases:

- $k = 0$ : the string does not belong to the language

- $k = 1$ : unambiguous parsing (only one tree)

- $k > 1$ : ambiguous parsing (several valid trees for the same string)

The parse function is non-injective: the same string can correspond to multiple structures.

In summary:

- gen : one path → one sentence (deterministic)

- parse : one sentence → multiple trees (non-deterministic)

3. Catalan numbers: why ambiguity explodes

When $k > 1$ (the string is ambiguous), how many derivation trees can we have? The answer is given by Catalan numbers:

$C_n = \frac{1}{n+1}\binom{2n}{n} \sim \frac{4^n}{n^{3/2}\sqrt{\pi}}$

$C_n$ is the number of ways to associate $n$ elements in pairs — which corresponds to the number of binary trees with $n$ nodes. In practice:

| $n$ (constituents) | $C_n$ (possible trees) |

|---|---|

| 2 | 2 |

| 3 | 5 |

| 4 | 14 |

| 5 | 42 |

| 10 | 16 796 |

| 15 | 9 694 845 |

For 3 prepositional phrases (“I saw the man with the telescope on the hill”), there are already 5 possible derivation trees. For 10, over 16,000. The growth is exponential: $\sim 4^n$.

Shared derivation forests (Billot & Lang, 1989) allow for compact representation of an exponential number of trees in $\mathcal{O}(n^3)$ space — but the number of underlying trees remains exponentially large. Non-determinism is compressed, not eliminated.

4. Parikh and intrinsic ambiguity

One might hope that by rewriting the grammar more intelligently, ambiguity could always be eliminated. Parikh’s theorem (1966) shatters this hope:

Theorem (Parikh, 1966). There exist inherently ambiguous context-free languages — languages for which no unambiguous grammar can be written.

The classic example:

$L = \{a^n b^n c^m d^m \mid n, m \geq 1\} \cup \{a^n b^m c^m d^n \mid n, m \geq 1\}$

This language is the union of two context-free languages. Any string of the form $a^n b^n c^n d^n$ (when $n = m$) belongs to both sub-languages — and this dual membership cannot be eliminated by any choice of grammar.

Brabrand, Giegerich, and Moller (2007) add an even stronger result: it is undecidable to determine if a grammar is ambiguous. One cannot even verify, in general, if a given grammar exhibits the problem.

5. The Birman-Ullman Paradox

Birman and Ullman (1973) observed a paradox:

“Transforming a Chomsky grammar into a parsing mechanism is considerably difficult; but transforming a parsing scheme into a generation mechanism is much easier.”

At first glance, this inverts the asymmetry. Isn’t generation more difficult?

The resolution lies in the distinction between transforming from one mode to another and operating within a mode.

A parsing scheme already contains the structural analysis — it has resolved ambiguities, explored the derivation space, encoded the recognition strategy. Converting it into a generator is an “informational downgrade“: one discards the analytical structure and keeps only the generative path. It’s easy because one discards information.

Converting a generative grammar into a parser is an “informational upgrade“: one must add the analytical machinery (lookahead, disambiguation, error recovery) absent from the grammar. It’s difficult because one creates information.

The Birman-Ullman observation therefore confirms the asymmetry: parsing contains more information than generation. The asymmetry of transformation is the mirror image of the asymmetry of operation.

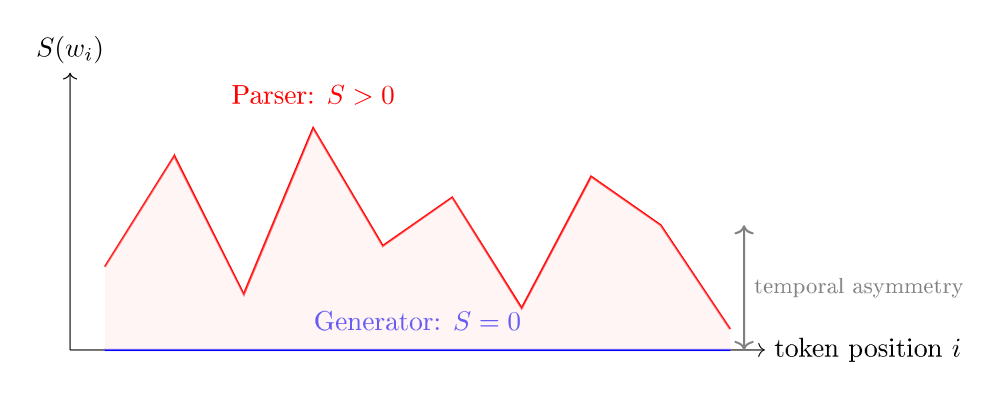

6. Surprisal: formalized temporal asymmetry

Perhaps the most surprising (pun intended) dimension is the temporal one. Hale’s surprisal (2001) formalizes it.

The surprisal of the $i$-th token is:

$S(w_i) = -\log_2 P(w_i \mid w_1, w_2, \ldots, w_{i-1})$

In English: “how many bits of information does this token provide, given what we have already seen?”. The more unexpected a token is, the higher its surprisal.

For a deterministic generator, the system knows which token it will produce:

$P_{\text{gen}}(w_i \mid w_1, \ldots, w_{i-1}) = 1 \quad \Longrightarrow \quad S_{\text{gen}}(w_i) = 0$

The generator is never surprised. It creates the future — it knows it in advance. Surprisal = zero, always.

For an incremental parser, the system has seen $w_1, \ldots, w_i$ but not what follows. It maintains competing hypotheses:

$P_{\text{parse}}(w_i \mid w_1, \ldots, w_{i-1}) < 1 \quad \Longrightarrow \quad S_{\text{parse}}(w_i) > 0$

The parser is surprised — with each token, it must re-evaluate its hypotheses. Surprisal > 0, always.

Surprisal quantifies temporal asymmetry: exactly zero for the generator, strictly positive for the parser. This is the mathematical formalization of the intuition: the composer knows what they will write; the listener discovers it as they go along.

[!Note: the analogue in signal processing]

In digital signal processing, a causal system at time $t$ depends only on past inputs ($\leq t$). An anti-causal system can access future inputs. The generator is causal (it creates the future); a parser without lookahead is also causal (it only sees the past), but a parser with lookahead is partially anti-causal — it looks into the future. Bidirectional encoders like BERT access the entire sequence: a pure analytical capability.

7. The summary table

Here are the six dimensions brought together in a single table:

| Dim | Name | Generation | Recognition | Key Reference |

|---|---|---|---|---|

| D1 | Complexity | $\mathcal{O}(n)$ | $\mathcal{O}(n^3)$ to undecidable | Aho et al. 1986 |

| D2 | Ambiguity | Deterministic, injective (1 path → 1 sentence) | Non-deterministic, $C_n \sim 4^n$ trees, non-injective (1 sentence → $k$ structures) | Parikh 1966, Brabrand 2007 |

| D3 | Direction | Always top-down | Free choice (LL, LR, …) | Shieber 1988 |

| D4 | Information | Complete knowledge | Inference from surface | Shannon 1948 |

| D5 | Inference | gen < recog < infer | — | Gold 1967 |

| D6 | Temporality | Causal : $S = 0$ | Predictive : $S > 0$ | Hale 2001 |

Key Takeaways

- The complexity gap grows with expressive power: from none (Type 3) to infinite (Type 0). The $T_{\text{parse}}$ formula by case unambiguously demonstrates this.

- Generation is an injective function (one path → one sentence); parsing is non-injective (one sentence → $k$ trees). Catalan numbers $C_n \sim 4^n$ quantify the growth of ambiguity.

- Ambiguity is intrinsic to certain languages (Parikh 1966) and undecidable in general (Brabrand 2007). It cannot be eliminated or even detected.

- The Birman-Ullman paradox confirms the asymmetry: transforming a parser into a generator is easy (informational downgrade), the reverse is difficult (informational upgrade).

- Surprisal formalizes temporal asymmetry: $S = 0$ for the generator (it creates the future), $S > 0$ for the parser (it predicts under uncertainty).

- The six dimensions are independent: each captures a distinct aspect of the generation-recognition divide.

Further Reading

Complexity

- Aho, A.V., Sethi, R. & Ullman, J.D. (1986). Compilers: Principles, Techniques, and Tools. Addison-Wesley — complexities of CYK, Earley, LL, LR.

- Hopcroft, J.E. & Ullman, J.D. (1979). Introduction to Automata Theory, Languages, and Computation. Addison-Wesley — fundamental results.

Ambiguity and Non-Injectivity

- Parikh, R.J. (1966). “On Context-Free Languages.” Journal of the ACM, 13(4), 570–581 — inherent ambiguity of CFLs.

- Brabrand, C., Giegerich, R. & Møller, A. (2007). “Analyzing Ambiguity of Context-Free Grammars.” Science of Computer Programming �� undecidability of ambiguity.

- Billot, S. & Lang, B. (1989). “The Structure of Shared Forests in Ambiguous Parsing.” ACL — shared derivation forests.

- Jurafsky, D. & Martin, J.H. (2023). Speech and Language Processing (3rd ed.) — Catalan numbers and ambiguity.

Birman-Ullman

- Birman, A. & Ullman, J.D. (1973). “Parsing Algorithms with Backtrack.” Information and Control, 23(1), 1–34 — the original paradox.

Surprisal and Temporality

- Hale, J. (2001). “A Probabilistic Earley Parser as a Psycholinguistic Model.” NAACL — surprisal as a model of processing difficulty.

- Levy, R. (2008). “Expectation-Based Syntactic Comprehension.” Cognition, 106(3), 1126–1177 — expectation-based comprehension.

- Stolcke, A. (1995). “An Efficient Probabilistic Context-Free Parsing Algorithm.” Computational Linguistics — probabilistic Earley parser, prefix probabilities.

Grammatical Inference

- Gold, E.M. (1967). “Language Identification in the Limit.” Information and Control, 10(5), 447–474 — the fundamental impossibility result.

- de la Higuera, C. (2010). Grammatical Inference: Learning Automata and Grammars. Cambridge University Press — comprehensive reference.

In the Corpus

- L1 — The hierarchy levels and their automata

- L6 — Operational Semantics: another mathematical formalism from the L series

- L9 — Type 2+: TAG, CCG, MCFG

- L13 — Overview of asymmetry (popularized version)

- L14 — The direction of parsing: LL, LR and the Dragon Book

- L16 — Why bidirectionality was not transferred

Glossary

- Inherent ambiguity : A property of a language (not a grammar) such that any grammar generating it is ambiguous. Discovered by Parikh (1966).

- Birman-Ullman (paradox of) : The observation that transforming a parser into a generator is easy, while transforming a grammar into a parser is difficult — seemingly the inverse of asymmetry, but in reality its confirmation.

- Causal (system) : In signal processing, a system whose output at time $t$ depends only on inputs at times $\leq t$. The generator is causal; a parser without lookahead is also causal.

- Catalan (numbers) : The sequence $C_n = \frac{1}{n+1}\binom{2n}{n}$ which counts the number of binary trees with $n$ nodes. Exponential growth $\sim 4^n / n^{3/2}\sqrt{\pi}$.

- Shared derivation forest : A data structure that compactly represents (in $\mathcal{O}(n^3)$) an exponentially large number of derivation trees. Non-determinism is compressed, not eliminated.

- Injective (function) : A function that maps distinct inputs to distinct outputs. Generation is injective in $d$ (one path → one sentence); parsing is non-injective (one sentence → multiple trees).

- PSPACE-complete : A class of problems solvable in polynomial space but for which no polynomial-time algorithm is known. Parsing context-sensitive grammars is PSPACE-complete.

- Surprisal : $S(w_i) = -\log_2 P(w_i | w_1 \ldots w_{i-1})$. Measures the information contributed by a token in context. Zero for the generator, positive for the parser.

- $T_{\text{gen}}$, $T_{\text{parse}}$ : Time complexity functions for generation and recognition respectively, parameterized by Chomsky level $k$ and length $n$.

Prerequisites : L1, L6, L13

Reading time : 15 min

Tags : #formulas #asymmetry #complexity #surprisal #Catalan #Parikh #Birman-Ullman