L13) Generate or Recognize

The Duality of Formal Grammars

A grammar is not only used to produce sentences. It can also analyze them. But these two uses — generation and recognition — are neither symmetrical nor equivalent. This asymmetry, rarely discussed, is nevertheless at the heart of music.

Where Does This Article Fit In?

In L1, we discovered the hierarchy of formal languages. In L2, we learned how to construct grammars that produce sentences by derivation. But we have always worked in a single direction: from meaning to sentences.

This article poses a rarely formulated question: what happens when we reverse the direction? When, instead of producing a sentence from a grammar, we receive a sentence and try to understand if it belongs to the language — and what structure the grammar assigns to it?

This is the difference between composing and analyzing, between speaking and listening, between encoding and decoding. And this difference is far from trivial.

Why Is This Important?

A Famous Terminological Trap

The most cited book in formal music theory is titled A Generative Theory of Tonal Music (GTTM, Lerdahl & Jackendoff, 1983 — over 5,000 citations). Yet, GTTM does not generate any music. It is an analytical theory: given an existing musical piece, it provides preference rules to assign a hierarchical structure to it (grouping, metric structure, temporal reduction).

The term “generative” is used in the original Chomskyan sense — a set of formally explicit rules — and not in the sense of “production.” Lerdahl himself clarified that GTTM models the listener (reception), not the composer (emission) [Lerdahl2009].

This confusion illustrates a deeper problem: we use the same word — “grammar” — for two fundamentally different activities.

Two Sides of the Same Coin

Every formal grammar defines a language — a set of valid strings. But it can be used in two ways:

| Direction | Technical Name | Question Asked | Musical Analogy |

| ——————– | ————————— | “What sentences can this grammar produce?” | The composer writes a piece |

| → Generation | Production / Derivation | | |

| ← Recognition | Parsing / Analysis | “Does this sentence belong to the language? What structure corresponds to it?” | The musicologist analyzes a musical production |

Mathematically, both uses define the same language: the set of strings is identical. But operationally, the two directions are radically different.

The Idea in a Nutshell

A formal grammar is a bidirectional object: it can generate sentences or analyze them, but the two directions differ along at least six independent dimensions — complexity, ambiguity, direction, information, inference, and temporality. The asymmetry is structural: the parser is always constrained, the generator is not necessarily so.

Let’s Explain Step by Step

1. Computational Asymmetry

The first difference is algorithmic in nature. For a Context-Free Grammar (CFG — L2):

- Generating a sentence is fast: one starts from the axiom (start symbol — the grammar’s starting symbol), applies rewriting rules, and each step is a local choice. The complexity is linear in the length of the derivation.

- Recognizing a sentence is more costly: given a string of $n$ symbols, determining if it belongs to the language and reconstructing its structure requires an algorithm like CYK (Cocke-Younger-Kasami) or Earley, in $O(n^3)$ time — that is, proportional to the cube of the input length.

For more expressive formalisms, the gap widens dramatically. Context-sensitive grammars (Type 1, L1) have a recognition problem that is PSPACE-complete (Polynomial Space complete — a complexity class for which no efficient algorithm is generally known) [Berwick1982].

In other words: producing is generally easier than analyzing.

But beware: this formulation is misleading. Unconstrained generation — applying rules randomly — is trivial for any formalism. The result is “grammatical” but useless. Real generation operates under constraints (a target meaning, a style, aesthetic rules), and constrained generation can be as difficult as parsing. The true asymmetry is structural: parsing is always constrained (the input is given, non-negotiable), while generation may or may not be.

→ For mathematical formalizations of this gap ($T_{\text{parse}}$ formula by Chomsky level, semi-log graph), see L15.

Sidebar: The Puzzle Analogy

Building a 1,000-piece puzzle from the complete image is relatively simple: you decide how you’re going to cut it, so you know which piece goes where. But receiving a mixed-up puzzle and figuring out how to assemble it — in what order, by what strategy — is an entirely different problem. The grammar is the reference image; generation builds the puzzle; parsing disassembles it to understand its structure.

2. Ambiguity Asymmetry

The second difference concerns structural ambiguity — a unique phenomenon that manifests in three related aspects:

Determinism. In generation, one can choose one derivation at each step (possibly randomly, as in probabilistic PCFGs — cf. B1). The result is a single sentence. In recognition, a sentence can have multiple valid analyses: the same sequence of words can correspond to several derivation trees (L4).

In linguistics, the sentence “I saw the man with the telescope” has two analyses: I used the telescope to see, or the man had the telescope. In music, the same harmonic progression can be analyzed in several ways by GTTM (M6).

Non-Injectivity. Formally, generation is an injective function: one derivation path produces a single sentence. Parsing is non-injective: the same sentence can correspond to $k$ derivation trees. Generation is a one-to-one process, recognition a one-to-many process.

Cardinality (Eco). Umberto Eco, in The Open Work (1962), formalized the same asymmetry in the aesthetic domain: a single production (the work) admits multiple interpretations (analyses). In music, the composer knows why they chose a particular note; the listener must infer that intention. The generation function converges (multiple intentions → one work), while the analysis function diverges (one work → multiple interpretations).

These three aspects — determinism, non-injectivity, cardinality — are one and the same phenomenon viewed from three angles.

3. Directional Asymmetry

The third difference is the most invisible — by dint of being familiar.

Generation has a fixed direction: it is intrinsically top-down. One starts from the axiom (the intention, the “what to say”) and descends towards the terminal symbols (the surface, the words or notes). As Chomsky formulated it: a grammar generates “top-down, not left-to-right.”

Parsing, on the other hand, has the choice of direction. It can be top-down (LL, recursive descent), bottom-up (LR, shift-reduce), or combine both (Earley, left-corner). The Dragon Book — the reference book in compiler design — dedicates entire chapters to this choice. A choice that exists only for the parser.

The most striking observation: the parsing strategy most different from generation (LR, bottom-up) is the most powerful. The one that mimics generation (LL, top-down) is the weakest.

→ This dimension is developed in detail in L14.

4. Information Asymmetry

The generator has access to everything: communicative intent, stylistic constraints, pragmatic context. The analyzer only has access to the observable signal — the sound surface, the sequence of symbols.

This is the fundamental problem of all musical analysis: creative constraints are available to the generator but must be reconstructed by the analyzer. Shannon’s model (§ below) formalizes this asymmetry at the most fundamental level: the encoder knows the source, the decoder does not.

5. Temporal Asymmetry: Offline vs. Real-time

The previous four asymmetries implicitly assume that all the necessary time is available — that one is working offline. But when the constraint of real-time is added, the asymmetry deepens.

- Generating in real-time is relatively natural: one produces symbol by symbol, as it flows. A composer improvising, a live coder modifying their code during a concert — each decision is local and immediate.

- Analyzing in real-time is much more difficult: one receives the signal as it comes, without knowing what follows. The analyzer must maintain multiple hypotheses about the current structure — this is the problem of incremental parsing [Hale2001].

In music, this asymmetry is omnipresent. A listener at a concert hears notes one by one. They cannot “go back” to re-analyze a passage. Their analysis is constrained by the flow — they must interpret in real-time, with partial information.

Sidebar: The Concert vs. The Score

A musicologist analyzing a Beethoven sonata has the complete score in front of them — they can go back and forth, compare distant passages, revise their interpretations. A listener at a concert only has the sound flow: each second erases the previous one (except in memory). Both perform “analysis,” but under radically different temporal constraints. The first works offline (all information available at once); the second works in streaming (partial, sequential, irreversible information).

The IDyOM model formalizes exactly this situation: it predicts the next note from the notes already heard, without ever seeing the future. It is an incremental decoder in Shannon’s sense — and that is precisely what makes it more difficult than generation.

→ Surprisal theory (Hale, Levy) mathematically formalizes this temporal asymmetry: see L15 §6.

6. The Bridge: Analysis by Synthesis

The analysis-by-synthesis paradigm, born in speech perception research in the 1960s, proposes a bridge between the two directions: to recognize a signal, one generates internal candidates and compares them to the received signal [HalleStevens1962].

Analysis contains synthesis as a subprocess. The receiver is not a simple passive filter: it possesses an internal generative model that produces hypotheses, tested against the input.

In music, this corresponds to the expert listener’s experience: when you hear a ii-V-I (the subdominant → dominant → tonic progression, for example Dm7 → G7 → Cmaj7 in C major), you anticipate the resolution because your internal model has generated that expectation. Models like IDyOM (Information Dynamics of Music — a musical prediction model based on corpus statistics) formalize exactly this predictive process [Pearce2018].

7. Reversible Grammars

Some formalisms are designed to work in both directions with the same grammar:

- GF (Grammatical Framework, Ranta, 2019) — a multilingual formalism where grammars are explicitly reversible, allowing both parsing and generation [Ranta2019]

- Unification grammars — used in bidirectional machine translation [vanNoord1990]

- Steedman (1984 → 2014) — the same CCG (Combinatory Categorial Grammar — L9) grammar for jazz progressions was used first to generate chord sequences [Steedman1984], then 30 years later to parse them (analyze them automatically) [GranrothWilding2014]. This is a remarkable example of the same musical grammar used in both directions — with very different computational challenges.

But reversibility is not automatic. Strzalkowski (1993) showed that a grammar designed for parsing requires transformations (an “inversion”) to be used for generation. The very fact that an inversion process is necessary proves the operational asymmetry [Strzalkowski1993].

→ Bidirectionality has existed for 50 years in NLP — why isn’t it everywhere? See L16.

8. In Music: Three Paradigms

The generation/recognition duality structures the entire field of computational musicology. Formal musical systems can be classified into three paradigms, according to the directions they support:

\usepackage{tikz}

\usetikzlibrary{calc, positioning}

\begin{document}

\definecolor{cAnal}{RGB}{41,128,185}

\definecolor{cGen}{RGB}{39,174,96}

\definecolor{cBidi}{RGB}{192,57,43}

\definecolor{cNeural}{RGB}{142,68,173}

\begin{tikzpicture}[scale=1.5, transform shape,

every node/.style={font=\scriptsize, text=black},

sysnode/.style={fill=#1!15, draw=#1!50, rounded corners=2pt,

inner sep=2pt, font=\scriptsize\bfseries, text=black}]

% Timeline axis

\draw[black!40, line width=0.8pt, -{latex}] (0,0) -- (12.5,0);

% Year ticks

\foreach \x/\yr in {0/1980, 2/1990, 4/2000, 6/2010, 8/2020, 10/2025} {

\draw[black!40] (\x, -0.1) -- (\x, 0.1);

\node[below, font=\tiny, text=black!60] at (\x, -0.15) {\yr};

}

% Legend

\node[sysnode=cAnal] at (1.5, 4.2) {Analytique};

\node[sysnode=cGen] at (4, 4.2) {G\'{e}n\'{e}ratif};

\node[sysnode=cNeural] at (6.5, 4.2) {Neural};

\node[sysnode=cBidi] at (9, 4.2) {Bidirectionnel};

%

% === ANALYTICAL (blue, top band) ===

\node[sysnode=cAnal] at (0.6, 3.4) {GTTM};

\node[font=\tiny, text=black!50] at (0.6, 3.0) {1983};

\node[sysnode=cAnal] at (4.8, 3.4) {Schenker};

\node[font=\tiny, text=black!50] at (4.8, 3.0) {2004};

\node[sysnode=cAnal] at (7.6, 3.4) {IDyOM};

\node[font=\tiny, text=black!50] at (7.6, 3.0) {2018};

\node[sysnode=cAnal] at (9.4, 3.4) {Finkensiep};

\node[font=\tiny, text=black!50] at (9.4, 3.0) {2019};

%

% === GENERATIVE (green, upper-mid band) ===

\node[sysnode=cGen] at (0.2, 2.2) {Holtzman};

\node[font=\tiny, text=black!50] at (0.2, 1.8) {1981};

\node[sysnode=cGen] at (1.6, 2.2) {Max};

\node[font=\tiny, text=black!50] at (1.6, 1.8) {1988};

\node[sysnode=cGen] at (3.2, 2.2) {SC};

\node[font=\tiny, text=black!50] at (3.2, 1.8) {1996};

\node[sysnode=cGen] at (4.4, 2.2) {OM};

\node[font=\tiny, text=black!50] at (4.4, 1.8) {1999};

\node[sysnode=cGen] at (6.8, 2.2) {Tidal};

\node[font=\tiny, text=black!50] at (6.8, 1.8) {2014};

%

% === NEURAL (purple, lower-mid band) ===

\node[sysnode=cNeural] at (8, 1.5) {Jukebox};

\node[font=\tiny, text=black!50] at (8, 1.1) {2020};

\node[sysnode=cNeural] at (9.5, 1.5) {MusicLM};

\node[font=\tiny, text=black!50] at (9.5, 1.1) {2023};

\node[sysnode=cNeural] at (11, 1.5) {Suno};

\node[font=\tiny, text=black!50] at (11, 1.1) {2023};

%

% === BIDIRECTIONAL (red, bottom band) ===

\node[sysnode=cBidi] at (0.8, 0.6) {Steedman};

\node[font=\tiny, text=black!50] at (0.8, 0.25) {1984};

\draw[cBidi!60, dashed, line width=0.5pt] (1.5, 0.6) -- (6.6, 0.6);

\node[sysnode=cBidi] at (7.2, 0.6) {parser};

\node[font=\tiny, text=black!50] at (7.2, 0.25) {2014};

\node[sysnode=cBidi] at (2.4, 0.6) {BP3};

\node[font=\tiny, text=black!50] at (2.4, 0.25) {1992};

\node[sysnode=cBidi] at (5.2, 0.6) {OMax};

\node[font=\tiny, text=black!50] at (5.2, 0.25) {2006};

\node[sysnode=cBidi] at (8.8, 0.6) {Harrison};

\node[font=\tiny, text=black!50] at (8.8, 0.25) {2019};

\end{tikzpicture}

\end{document}

[!FIGURE] Figure 1 — Chronological taxonomy of formal musical systems according to the directions they support: analytical (blue), generative (green), neural (purple), bidirectional (red). The dashed line between Steedman 1984 and the 2014 parser illustrates the 30-year gap between generation and parsing for the same CCG grammar.

The Analytical Paradigm — grammar as a listening tool. These systems receive an existing piece and determine a corresponding structure:

- GTTM (Lerdahl & Jackendoff, 1983): listener’s model, not composer’s (M6)

- Computational Schenkerian analysis (Mavromatis & Brown, 2004): parsing an existing piece to extract its deep structure

- IDyOM (Pearce, 2018): statistical model of musical prediction — the listener as an inference machine

- Melodic graph grammar (Finkensiep et al., 2019): melodic parsing of Hindustani music — an analytical tool applied to a non-Western repertoire

- Grammar-based compression (Humphreys et al., 2021): grammar induction as an implicit analytical tool — compressing a piece means extracting its structure

The Generative Paradigm — grammar as a composition tool. These systems produce music from rules or patterns, but cannot analyze:

- Musical generative grammars (Holtzman, 1981): pioneering use of context-free grammars for automatic composition

- Max (Puckette, 1988/IRCAM): visual dataflow programming for real-time audio — born at IRCAM, became the dominant platform for electroacoustic creation and interactive art

- SuperCollider (McCartney, 1996): open-source platform for synthesis and algorithmic composition — its pattern library (Pbind, Pseq, Prand…) constitutes a generative formalism embedded in the language. It is also the target of BP2SC (I1) and the host of TidalCycles (I3)

- OpenMusic (Assayag et al., 1999/IRCAM): computer-assisted composition environment — visual Lisp programming with constraint solving, used by many contemporary composers (Music Representation Team, IRCAM)

- Euterpea (Quick & Hudak, 2013): functional composition in Haskell — music as an algebraic data type

- TidalCycles (McLean, 2014): live coding with temporal patterns — direct heir of Bel (M11), runs on SuperCollider

- Musical L-systems (Roncoroni, 2021): Lindenmayer’s parallel grammars applied to generative remixing — an intrinsically unidirectional formalism

- CFG+Markov system (Kamberaj et al., 2025): CFG for chord progressions and Markov chains for melody — an entirely generative system

Beyond formal approaches, neural generation has transformed the landscape since 2020. These systems use no explicit grammar — musical “rules” are entirely implicit in the weights learned through deep learning:

- Jukebox (Dhariwal et al., 2020): raw audio generation via VQ-VAE (Vector Quantized Variational Autoencoder) — OpenAI

- MusicLM (Agostinelli et al., 2023): text-to-music generation via hierarchical sequence-to-sequence model — Google

- MusicGen (Copet et al., 2023): single-stage autoregressive transformer, text or melody conditioning — Meta

- Suno (2023): text-to-song generation accessible to the general public, including voice and lyrics — unpublished architecture

These systems are powerfully generative but entirely opaque: they can neither analyze an existing piece, nor explain their choices, nor expose the “rules” they have learned. The absence of explicit grammar is precisely what makes them inexplicable. The analytical cost has not disappeared — it has been shifted to the training phase (cf. L16).

The Bidirectional Paradigm — grammar as a bridge. These systems use the same grammar in both directions, but with highly variable degrees of integration:

- Jazz CCG (Steedman, 1984 → Granroth-Wilding, 2014): the same Combinatory Categorial Grammar for jazz progressions, first used to generate, then 30 years later to parse. A remarkable case — but the two directions were developed separately.

- EMI (Cope, 1989): Experiments in Musical Intelligence — the system analyzes a corpus to extract stylistic conventions, then generates new pieces in the same style. Both directions in the same system, but sequentially (analysis first, generation next).

- Bol Processor (Bel & Kippen, 1992): the most integrated case. BP3 has three modes of using the same grammar:

– PROD (production, modus ponens): the grammar generates musical sequences

– ANAL (analysis, modus tollens): the grammar tests if a sequence belongs to the language and, if so, identifies its structure

– TEMP (template exploration): the grammar exhaustively enumerates all possible derivation structures, producing structural skeletons that can optionally accelerate analysis (ANAL) or serve as a standalone design tool

- OMax/SoMax (Assayag, Dubnov et al., 2006/IRCAM): machine improvisation based on the Factor Oracle automaton — a formal automaton (Type 3) built in real-time from a musician’s playing (analysis), then traversed to generate improvised responses. The same formalism serves both directions, but analysis remains at the level of surface sequences (no deep structure)

- Antescofo (Cont, 2008/IRCAM): score following coupled with a reactive language for accompaniment generation — both directions work simultaneously in real-time, but rely on different formalisms (probabilistic models for analysis, synchronous language for generation)

- Unified voice-leading model (Harrison & Pearce, 2019): analysis and generation of voice leading within a single framework — a recent and rare case of explicit musical bidirectionality

The complete cycle by Bel and Kippen for tabla perfectly illustrates the duality: a musician produces sequences of bols (mnemonic syllables of the tabla — B2), the system analyzes these sequences to infer a grammar (via QAVAID, Question Answer Validated Analytical Inference Device — an interactive grammatical inference system), then this grammar can re-produce new sequences, which the musician evaluates. It is an analysis → grammar → generation → evaluation → analysis loop [KippenBel1989].

9. The Third Direction: Grammatical Inference

Beyond the generation/recognition duality, there is an even more fundamental third direction: grammatical inference (grammar induction) — given examples of sentences, find the grammar itself [delaHiguera2010].

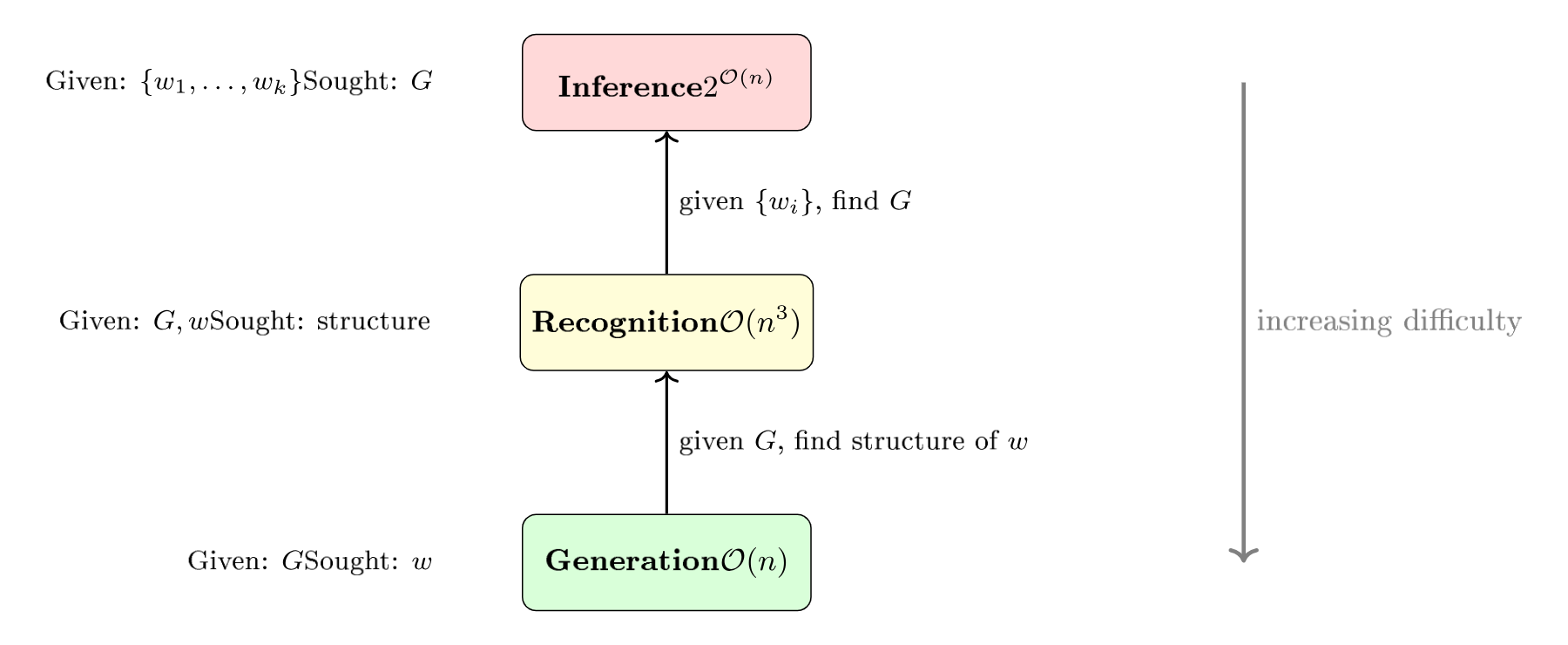

This is the inverse problem of formal language theory. The three operations form a hierarchy of increasing difficulty:

Generation ($\mathcal{O}(n)$) is the simplest: rules are applied. Recognition ($\mathcal{O}(n^3)$ for CFGs) is more costly: the structure is reconstructed. Inference ($2^{\mathcal{O}(n)}$) is the most difficult: the rules themselves are found from examples alone.

In music, this is exactly what a musicologist does when listening to a corpus and trying to extract the “rules” — stylistic conventions, harmonic constraints, rhythmic patterns. It is also what grammatical inference systems like QAVAID for tabla or the work of Cruz-Alcázar & Vidal for musical style identification do [CruzAlcazar2008].

This direction will be explored in detail in M10.

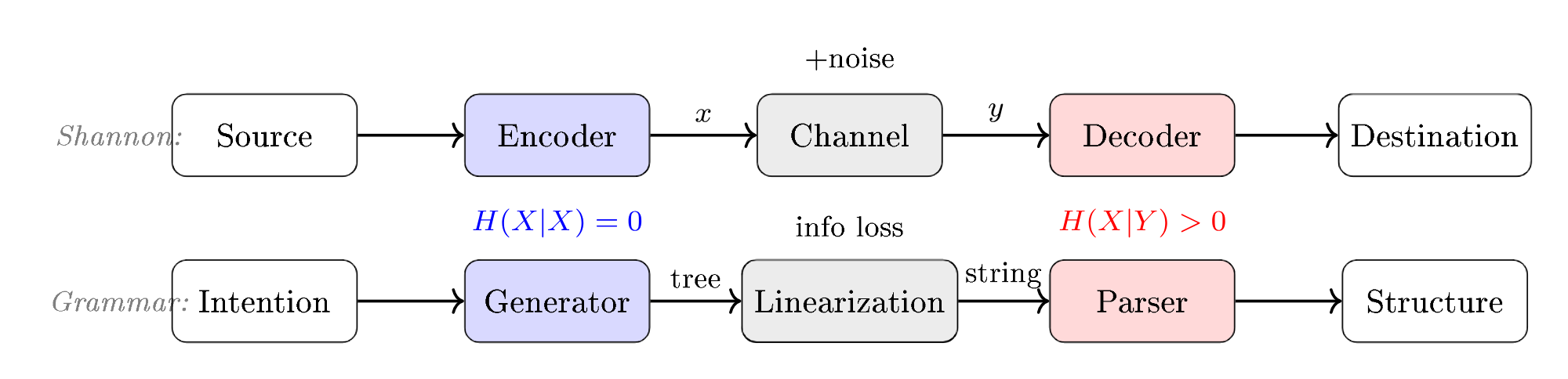

Shannon’s Model: Encoder $\neq$ Decoder

Claude Shannon’s communication model (1948) formalizes asymmetry at the most fundamental level. The diagram below shows the complete chain: a source produces a message, the encoder transforms it into a signal, the channel adds noise to it, and the decoder attempts to reconstruct the original message.

The encoder (generation) and the decoder (recognition) are not symmetrical:

- The encoder transforms a message into a signal — it is a choice among possibilities

- The decoder reconstructs the message from the noisy signal — it is an inference of the most probable

The channel introduces noise — uncertainty, loss. In music: air is a noisy channel, the ear is an imperfect decoder, the score is a lossy encoder (it does not capture timbre, touch, intention). Each translation between musical representations is an encoder → channel → decoder passage, with its own losses and ambiguities.

Key Takeaways

- Every grammar has two uses: to generate (produce sentences) and to recognize (analyze existing sentences).

- These two directions are mathematically equivalent (same language) but operationally asymmetric along six independent dimensions: complexity, ambiguity, direction, information, inference, and temporality.

- Generating is generally easier than analyzing: $O(n)$ vs $O(n^3)$ for CFGs, and the gap widens for more expressive formalisms.

- In real-time, the asymmetry deepens further: generating in streaming is natural (one produces as it flows), analyzing in streaming is difficult (one has not yet seen what follows — incremental parsing).

- Analysis by synthesis shows that recognition uses generation as an internal subprocess.

- In music, GTTM is analytical (despite its title), Steedman is bidirectional, and BP3 is explicitly reversible (PROD/ANAL/TEMP modes).

- Grammatical inference is the third direction: finding the grammar from examples — the fundamental problem of computational musicology.

Further Reading

Theoretical Foundations

- Berwick, R. (1984). “Strong Generative Capacity, Weak Generative Capacity, and Modern Linguistic Theories.” Computational Linguistics.

- Strzalkowski, T. (1993). Reversible Grammar in Natural Language Processing. Springer — foundational work on reversible grammars.

- de la Higuera, C. (2010). Grammatical Inference: Learning Automata and Grammars. Cambridge University Press — reference on grammatical inference.

Music

- Lerdahl, F. & Jackendoff, R. (1983). A Generative Theory of Tonal Music. MIT Press — the most influential analytical model.

- Steedman, M. (1984). “A Generative Grammar for Jazz Chord Sequences.” Music Perception — jazz grammar later used for parsing (Granroth-Wilding & Steedman, 2014).

- Puckette, M. (1988). “The Patcher.” ICMC — Max, real-time visual programming born at IRCAM.

- Bel, B. & Kippen, J. (1992). “Modelling Music with Grammars: Formal Language Representation in the Bol Processor” — the quintessential bidirectional system.

- Assayag, G., Rueda, C. & Laurson, M. (1999). “Computer-Assisted Composition at IRCAM: From PatchWork to OpenMusic.” Computer Music Journal, 23(3), 59–72 — constraint-assisted composition.

- Cruz-Alcázar, P.P. & Vidal, E. (2008). “Two Grammatical Inference Applications in Music Processing.” Applied Artificial Intelligence.

- Assayag, G. & Dubnov, S. (2004). “Using Factor Oracles for Machine Improvisation.” Soft Computing, 8(9), 604–610 — OMax, improvisation via Factor Oracle automaton (IRCAM).

- Cont, A. (2008). “Antescofo: Anticipatory Synchronization and Coupling.” ICMC — score following and reactive accompaniment (IRCAM).

- Finkensiep, C., Neuwirth, M. & Rohrmeier, M. (2019). “Generalized Graph Grammar for North Indian Melodic Syntax.” DLfM — melodic parsing applied to Hindustani music.

- Harrison, P.M.C. & Pearce, M.T. (2019). “A Unified Model for Voice-Leading Analysis and Generation.” — explicit musical bidirectionality for voice leading.

- Humphreys, G. et al. (2021). “Grammar-Based Compression for Music Analysis.” — grammar-based compression as an implicit analytical tool.

- Kamberaj, B. et al. (2025). “CFG-Based Chord and Markov Melody Generation.” — entirely generative system.

- McCartney, J. (2002). “Rethinking the Computer Music Language: SuperCollider.” Computer Music Journal, 26(4), 61–68 — the open-source platform for synthesis and algorithmic composition.

- Roncoroni, L. (2021). “L-Systems for Musical Remixing.” — Lindenmayer’s parallel grammars applied to musical generation.

Neural Generation

- Dhariwal, P. et al. (2020). “Jukebox: A Generative Model for Music.” arXiv:2005.00341 — raw audio generation via VQ-VAE (OpenAI).

- Agostinelli, A. et al. (2023). “MusicLM: Generating Music From Text.” arXiv:2301.11325 — hierarchical text-to-music generation (Google).

- Copet, J. et al. (2023). “Simple and Controllable Music Generation.” NeurIPS — MusicGen, single-stage transformer, text/melody conditioning (Meta).

Temporal Asymmetry and Incremental Parsing

- Hale, J. (2001). “A Probabilistic Earley Parser as a Psycholinguistic Model.” NAACL — foundational model of probabilistic incremental parsing.

Analysis-by-synthesis

- Halle, M. & Stevens, K.N. (1962). “Speech Recognition: A Model and a Program for Research.” IRE Transactions on Information Theory.

- Poeppel, D. & Monahan, P.J. (2011). “Feedforward and Feedback in Speech Perception: Revisiting Analysis by Synthesis.” Language and Cognitive Processes.

Deepening the Asymmetry

- L14 — The Direction of Parsing: LL, LR, and the Dragon Book (D3)

- L15 — The Formulas of Asymmetry: T_parse, surprisal, Birman-Ullman

- L16 — The Bidirectionality Paradox: 50 years of forgotten reversible grammars

In the Corpus

- L1 — Levels of complexity

- L2 — Grammars that produce

- L9 — Mildly context-sensitive formalisms (TAG, CCG)

- M6 — GTTM: the analytical paradigm applied

- M10 — Grammatical inference in music (upcoming)

- B6 — BP3 Wildcards: pattern matching in grammar

Glossary

- Analysis-by-synthesis: A recognition paradigm where the receiver generates internal candidates and compares them to the received signal. Analysis contains synthesis as a subprocess.

- ANAL (mode): Analytical mode of the Bol Processor — the grammar tests if a sequence belongs to the language (modus tollens).

- Structural ambiguity: Property of a sentence that admits multiple derivation trees for the same grammar. Frequent in recognition, absent in generation.

- Encoder / Decoder: In Shannon’s model, the encoder transforms a message into a signal (generation), the decoder reconstructs the message from the signal (recognition). They are not symmetrical.

- Reversible grammar: A grammar designed to be used in both directions (generation and parsing) without transformation. Examples: GF, unification grammars.

- IDyOM (Information Dynamics of Music): Statistical model of musical prediction based on corpus entropy. Models the listener as an inference machine.

- Grammatical inference (grammar induction): The “third direction” — finding the grammar from examples of sentences. The inverse problem of formal language theory.

- Modus ponens / modus tollens: In logic, modus ponens goes from the rule to the consequence (if A then B, A, therefore B — generative direction). Modus tollens goes from observation to premise (if A then B, B observed, therefore A plausible — analytical direction).

- NLG / NLU (Natural Language Generation / Understanding): The two sub-domains of NLP (Natural Language Processing) that embody the generation/recognition duality.

- Incremental parsing: Syntactic analysis that processes input symbol by symbol, as it is received, without waiting for the end of the sentence. Necessary for real-time analysis. More difficult than offline parsing because the analyzer does not have access to the rest of the signal.

- Parsing (syntactic analysis): The process which, given a sentence and a grammar, determines if the sentence belongs to the language and reconstructs its structure (derivation tree).

- PROD (mode): Production mode of the Bol Processor — the grammar generates musical sequences (modus ponens).

- QAVAID (Question Answer Validated Analytical Inference Device): Bel & Kippen’s interactive grammatical inference system for tabla. The name also means “grammar” in Arabic/Urdu.

Prerequisites: L1, L2

Reading time: 14 min

Tags: #grammars #duality #generation #recognition #parsing #analysis-by-synthesis #BP3